On August 31st, 2019, classic alerts will be deprecated. As our project has a half dozen classic alerts, today we are going to upgrade them to the new alerts.

Running the migration

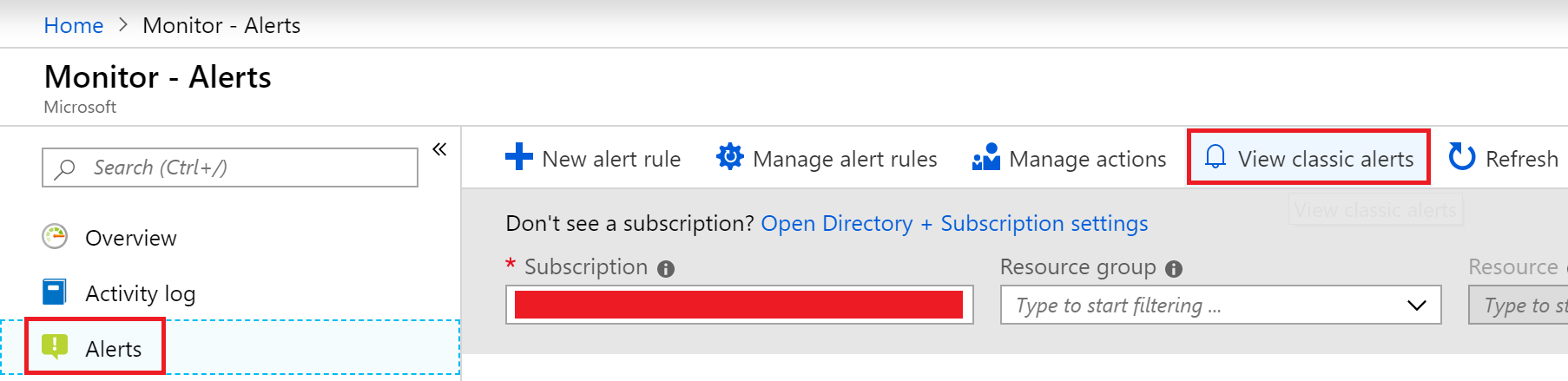

Fortunately, there is a migration process we can run. Note that once we run this on a subscription, the subscription cannot host “classic alerts” again. In the Azure Portal, we open “Monitor”, click “Alerts”, and then click “View classic alerts”.

We are presented with a list of classic alerts. We click the “Migrate to new rules” button.

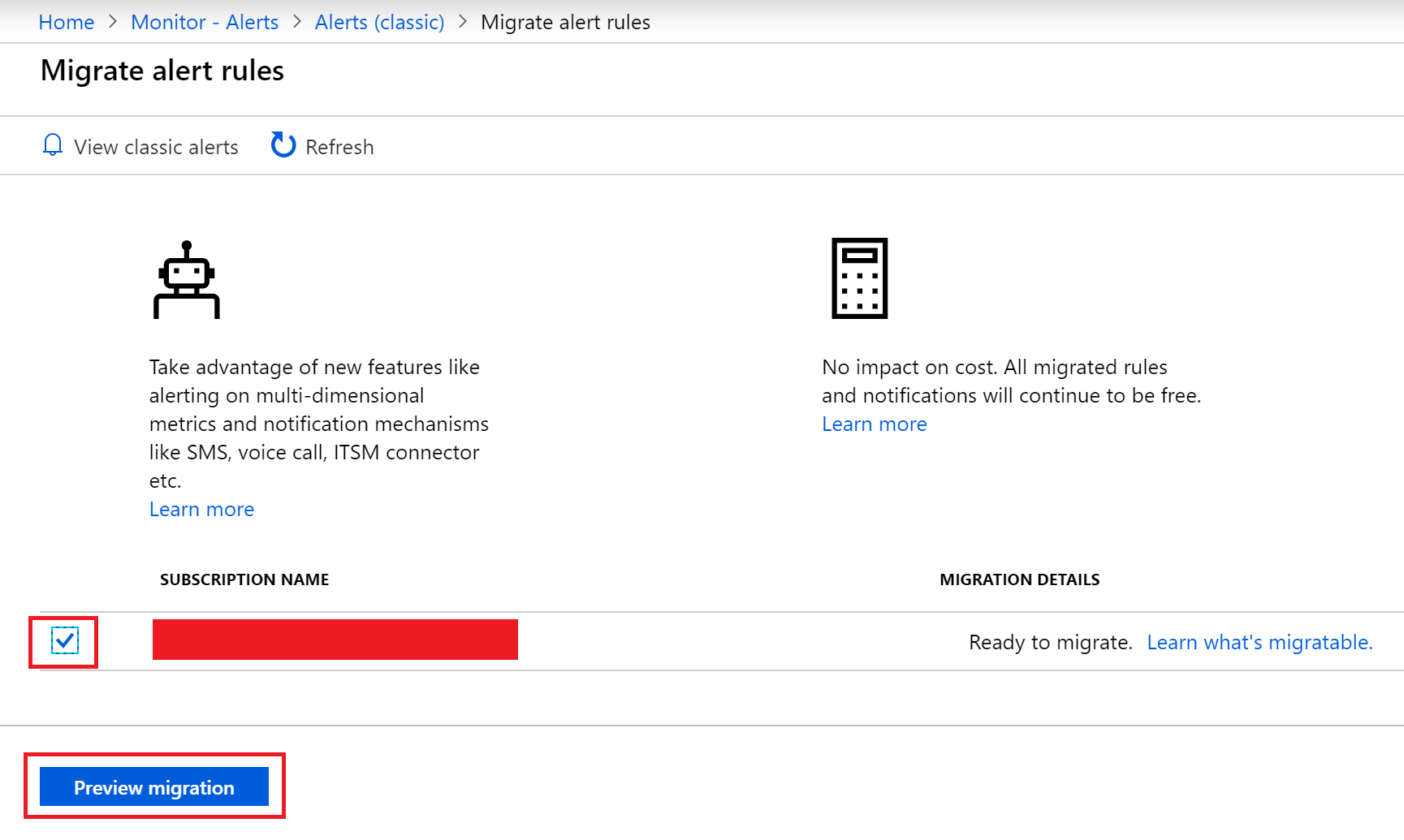

Now we select the subscription and click the “Preview Migration” button.

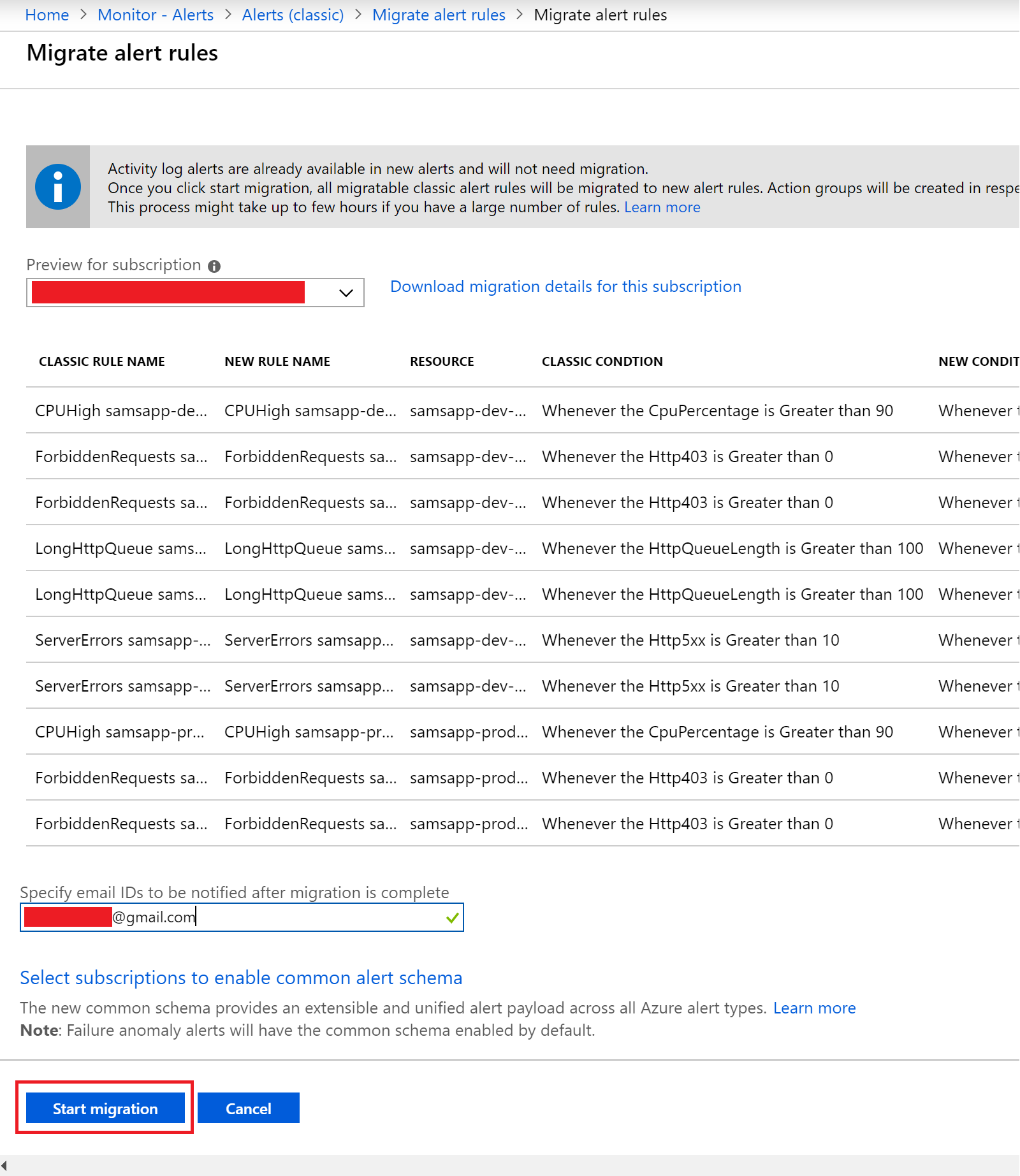

Finally, we add an email address for notification of the migration and then click the “Start migration” button.

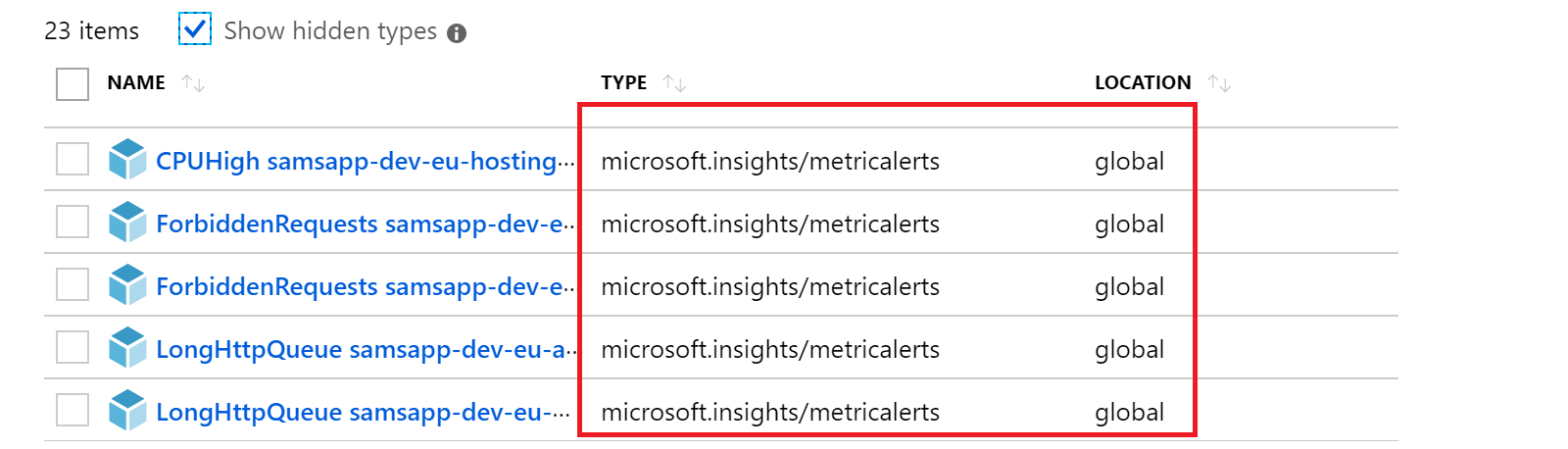

The migration starts, and after about ten minutes we receive an email that the migration is complete. Looking in our resource group, we can see the new alerts with the type “metricalerts”. Their location is now global, instead of being bound to a resource group.

Updating the ARM templates

As all of our classic alerts were defined in our ARM templates, we need to upgrade these too. First we identify the alerts in the ARM templates: searching our solution for “Microsoft.Insights/alertrules”, we find 5 alerts that need to be upgraded. We start the way we always do, by exporting the ARM templates to extract the new alert structure. There are two parts to this. The first is the action groups.
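For orientation before moving on, the classic resources that this search turns up generally look something like the sketch below. This is not copied from our templates: the `webSiteName` parameter, the alert name, and the threshold and window values are placeholders for illustration, and the `comments` property is only there to flag that.

```json
{
  "comments": "Illustrative classic alert. Names and values are assumptions, not taken from the real template.",
  "type": "Microsoft.Insights/alertrules",
  "apiVersion": "2014-04-01",
  "name": "[concat('ServerErrors ', parameters('webSiteName'))]",
  "location": "[resourceGroup().location]",
  "properties": {
    "name": "[concat('ServerErrors ', parameters('webSiteName'))]",
    "description": "Web app is returning HTTP 5xx server errors.",
    "isEnabled": true,
    "condition": {
      "odata.type": "Microsoft.Azure.Management.Insights.Models.ThresholdRuleCondition",
      "dataSource": {
        "odata.type": "Microsoft.Azure.Management.Insights.Models.RuleMetricDataSource",
        "resourceUri": "[resourceId('Microsoft.Web/sites', parameters('webSiteName'))]",
        "metricName": "Http5xx"
      },
      "operator": "GreaterThan",
      "threshold": 10,
      "windowSize": "PT5M"
    },
    "action": {
      "odata.type": "Microsoft.Azure.Management.Insights.Models.RuleEmailAction",
      "sendToServiceOwners": true,
      "customEmails": []
    }
  }
}
```

Note that in the classic shape the notification is embedded in the alert itself (`action`), which is why the new model needs a separate action group.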

Action groups are configured in Azure Monitor and are used to notify users that an alert has been triggered. We are creating a single action group (per environment), targeted at an email address we are defining in the parameters. Our action group looks like this:

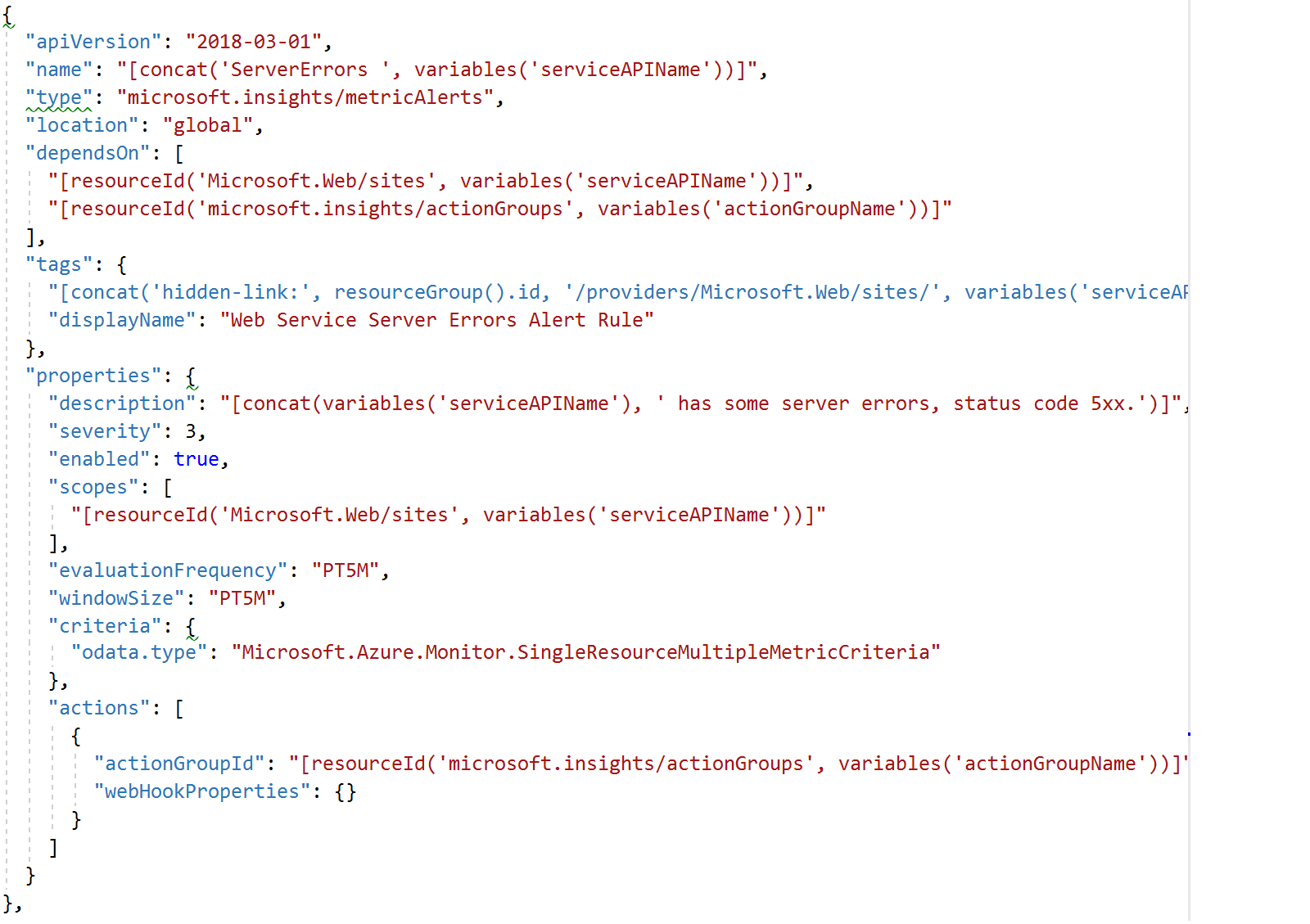

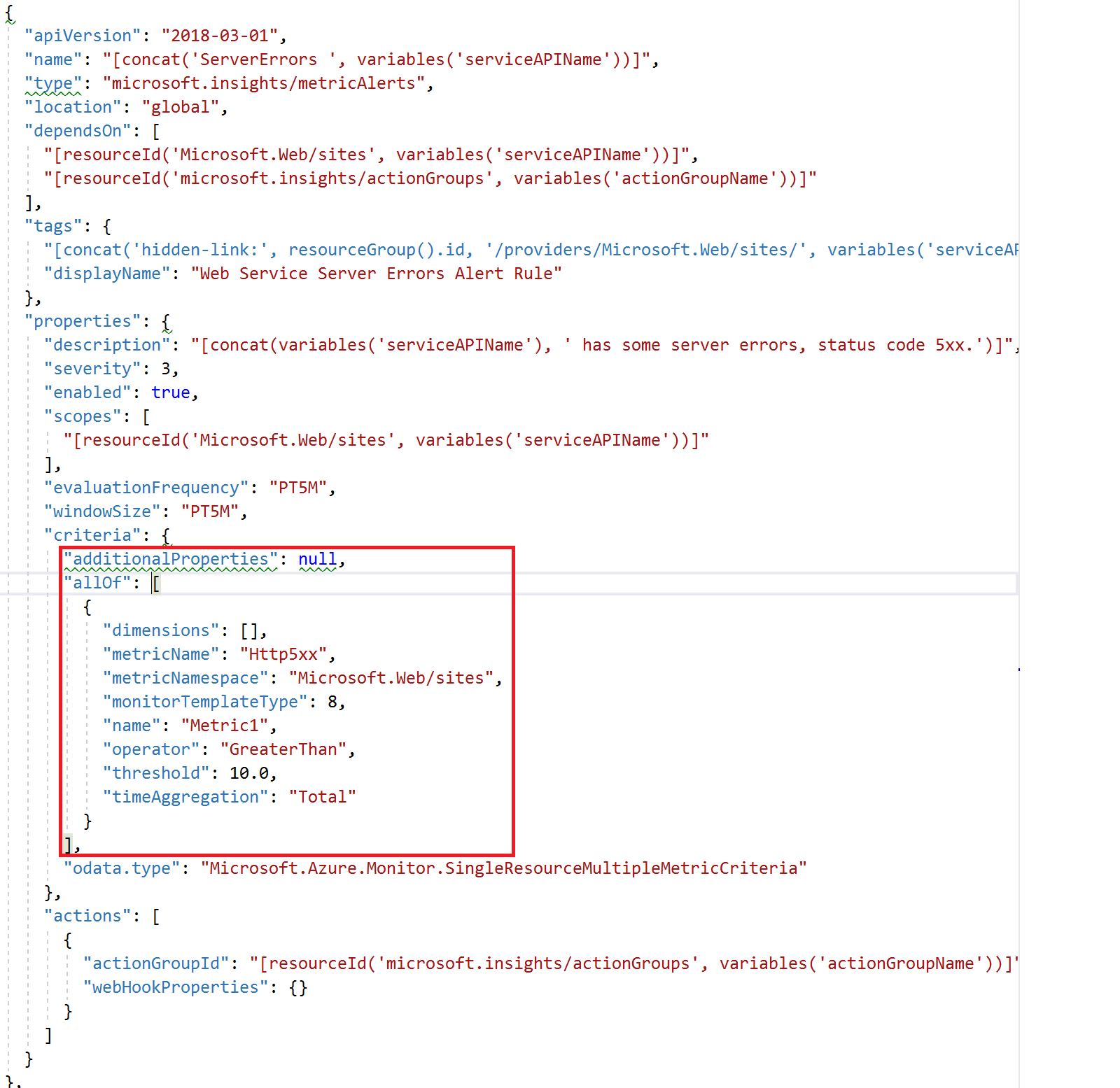
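In text form, an action group along these lines might be written as the following sketch. It is a fragment rather than a complete template (a full template also needs `$schema` and `contentVersion`), and the parameter names `actionGroupName` and `alertEmailAddress`, the short name, and the receiver name are hypothetical, chosen only for this example.

```json
{
  "parameters": {
    "actionGroupName": {
      "type": "string"
    },
    "alertEmailAddress": {
      "type": "string",
      "metadata": {
        "description": "Hypothetical parameter: the address that receives alert emails."
      }
    }
  },
  "resources": [
    {
      "comments": "Illustrative action group. Names are assumptions, not the values used in our templates.",
      "type": "Microsoft.Insights/actionGroups",
      "apiVersion": "2019-06-01",
      "name": "[parameters('actionGroupName')]",
      "location": "Global",
      "properties": {
        "groupShortName": "DevAlerts",
        "enabled": true,
        "emailReceivers": [
          {
            "name": "emailAlertRecipient",
            "emailAddress": "[parameters('alertEmailAddress')]"
          }
        ]
      }
    }
  ]
}
```

Keeping the email address in a parameter is what lets each environment point the same action group definition at a different recipient.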

Next we need to create the new alerts, using the type “Microsoft.Insights/metricalerts”. We start by exporting the ARM templates for our migrated alerts from the portal, and then integrating this new code into our ARM templates. Here is an example of the “server error” alert, which triggers if more than 10 HTTP 5xx errors occur within 5 minutes. Can you spot the missing piece that makes this broken? It stumped us for 4 days…

While we were stuck, our build would time out every single time. We tried removing the action groups (that doesn’t work, as they are required) and the alerts (this worked, but then we had no alerts), and spent quite a bit of time comparing our ARM template code to the exported templates, as the error gave us no more information than: “The job running on agent Hosted Agent has exceeded the maximum execution time of 60”. The answer turned out to be a known issue in the Azure Portal export: it doesn’t currently export alert criteria. To get around this, we can use the Azure CLI to extract this information:

az monitor metrics alert show --ids "/subscriptions/[REDACTED]/resourceGroups/SamLearnsAzureDev/providers/microsoft.insights/metricalerts/SamsAppServerErrorsAlert"

This works! Now we have the criteria and can successfully create and deploy our new alerts. See the modified alert ARM template code below.
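As a rough sketch of where the criteria fit, a metric alert resource for the server error case could look something like this. The parameter names (`serverErrorsAlertName`, `webSiteName`, `actionGroupName`), the severity, the evaluation frequency, and the `Total` aggregation are assumptions made for illustration; the real values should come from the `az monitor metrics alert show` output for each alert.

```json
{
  "comments": "Illustrative metric alert. Parameter names, severity, frequency and aggregation are assumptions.",
  "type": "Microsoft.Insights/metricAlerts",
  "apiVersion": "2018-03-01",
  "name": "[parameters('serverErrorsAlertName')]",
  "location": "global",
  "properties": {
    "description": "More than 10 HTTP 5xx errors within 5 minutes.",
    "severity": 3,
    "enabled": true,
    "scopes": [
      "[resourceId('Microsoft.Web/sites', parameters('webSiteName'))]"
    ],
    "evaluationFrequency": "PT1M",
    "windowSize": "PT5M",
    "criteria": {
      "odata.type": "Microsoft.Azure.Monitor.SingleResourceMultipleMetricCriteria",
      "allOf": [
        {
          "name": "ServerErrorsCriterion",
          "metricName": "Http5xx",
          "dimensions": [],
          "operator": "GreaterThan",
          "threshold": 10,
          "timeAggregation": "Total"
        }
      ]
    },
    "actions": [
      {
        "actionGroupId": "[resourceId('Microsoft.Insights/actionGroups', parameters('actionGroupName'))]"
      }
    ]
  }
}
```

The `criteria` object is the part the portal export left out. The `actions` entry ties the alert back to the action group, which is why removing the action groups was not an option.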

Wrap-up

Today we upgraded our alerts from the old “classic alerts” to the new alerts, and integrated them into our DevOps pipeline. We are maintaining five alerts for each environment, which are visible in our repo if you search for “metricalerts”:

- High CPU (average of greater than 80% CPU in 15 minutes) on the hosting plan (e.g. web server)

- Server errors (average of more than 10 HTTP 5xx errors in 10 minutes) on the web application

- Server errors (average of more than 10 HTTP 5xx errors in 10 minutes) on the web service

- Forbidden errors (average of more than 0 HTTP 403 errors in 5 minutes) on the web application

- Forbidden errors (average of more than 0 HTTP 403 errors in 5 minutes) on the web service
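The alerts in this list differ mainly in their scope, metric, threshold, and window. As one more hedged sketch, the high CPU alert is the one that targets the hosting plan rather than a web app, so its scope and metric change. The parameter names (`highCpuAlertName`, `hostingPlanName`, `actionGroupName`), the severity, and the evaluation frequency are again assumptions for illustration.

```json
{
  "comments": "Illustrative high CPU alert on the hosting plan. Parameter names, severity and frequency are assumptions.",
  "type": "Microsoft.Insights/metricAlerts",
  "apiVersion": "2018-03-01",
  "name": "[parameters('highCpuAlertName')]",
  "location": "global",
  "properties": {
    "description": "Average CPU above 80% over 15 minutes.",
    "severity": 3,
    "enabled": true,
    "scopes": [
      "[resourceId('Microsoft.Web/serverfarms', parameters('hostingPlanName'))]"
    ],
    "evaluationFrequency": "PT5M",
    "windowSize": "PT15M",
    "criteria": {
      "odata.type": "Microsoft.Azure.Monitor.SingleResourceMultipleMetricCriteria",
      "allOf": [
        {
          "name": "HighCpuCriterion",
          "metricName": "CpuPercentage",
          "dimensions": [],
          "operator": "GreaterThan",
          "threshold": 80,
          "timeAggregation": "Average"
        }
      ]
    },
    "actions": [
      {
        "actionGroupId": "[resourceId('Microsoft.Insights/actionGroups', parameters('actionGroupName'))]"
      }
    ]
  }
}
```

The forbidden error alerts would follow the web app pattern instead, swapping in the `Http403` metric, a threshold of 0, and a 5 minute window.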

References

- Migrating existing classic alerts: https://docs.microsoft.com/en-us/azure/azure-monitor/platform/alerts-using-migration-tool

- Upgrading our ARM templates: https://docs.microsoft.com/en-us/azure/azure-monitor/platform/alerts-prepare-migration#api-changes

- Action groups: https://docs.microsoft.com/en-us/azure/azure-monitor/platform/action-groups#manage-your-action-groups

- Time outs: https://docs.microsoft.com/en-us/azure/devops/pipelines/process/phases?view=azure-devops&tabs=yaml#timeouts

- Metric Alerts: https://blog.kloud.com.au/2019/02/05/automating-azure-instrumentation-and-monitoring-part-4-metric-alerts/

- Azure CLI commands: https://docs.microsoft.com/en-us/cli/azure/monitor/metrics/alert?view=azure-cli-latest#az-monitor-metrics-alert-show

- Featured image credit: https://www.hostpapa.ca/blog/wp-content/uploads/2017/02/Screen-Shot-2017-02-09-at-3.29.42-PM-min.png
