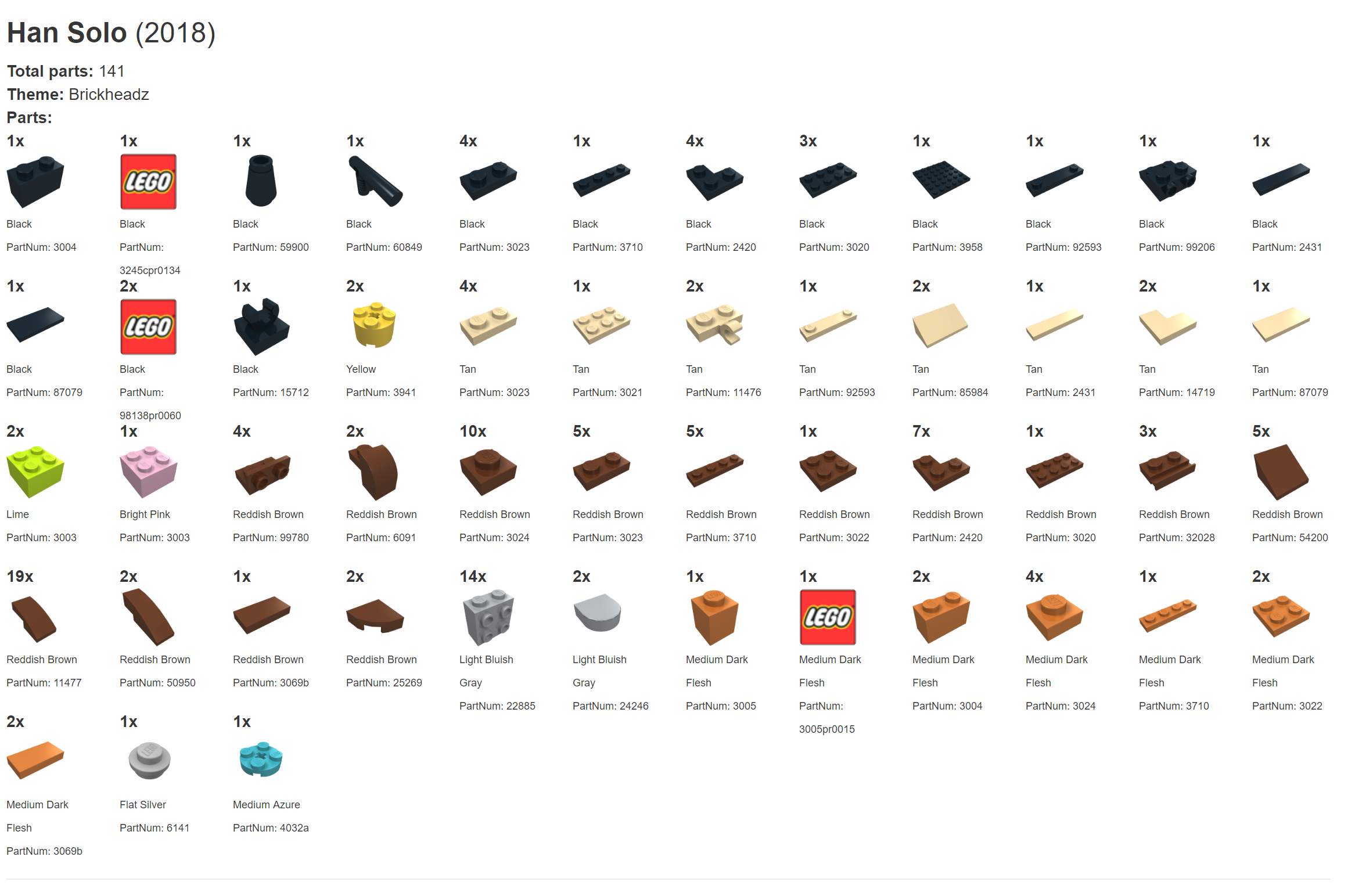

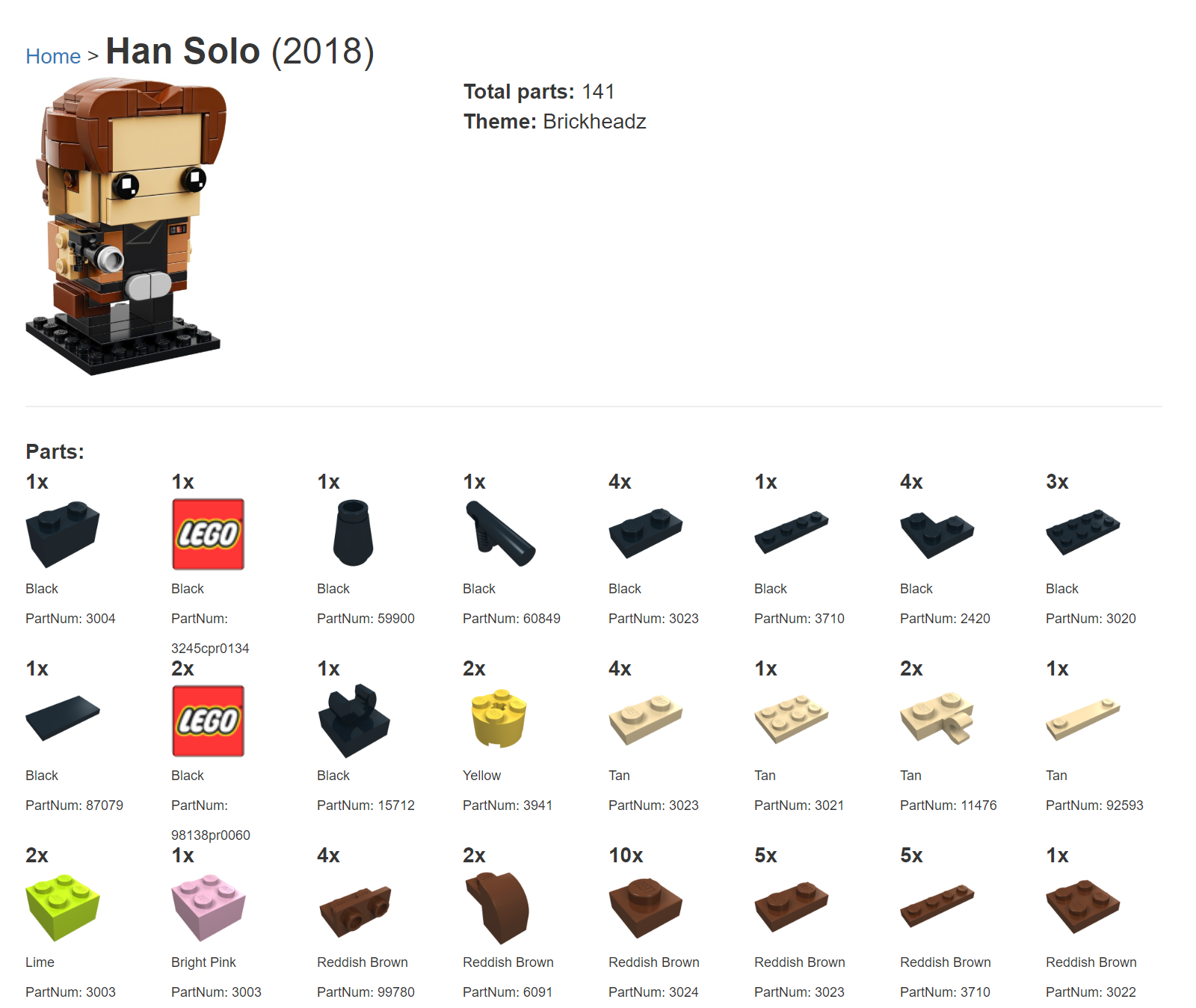

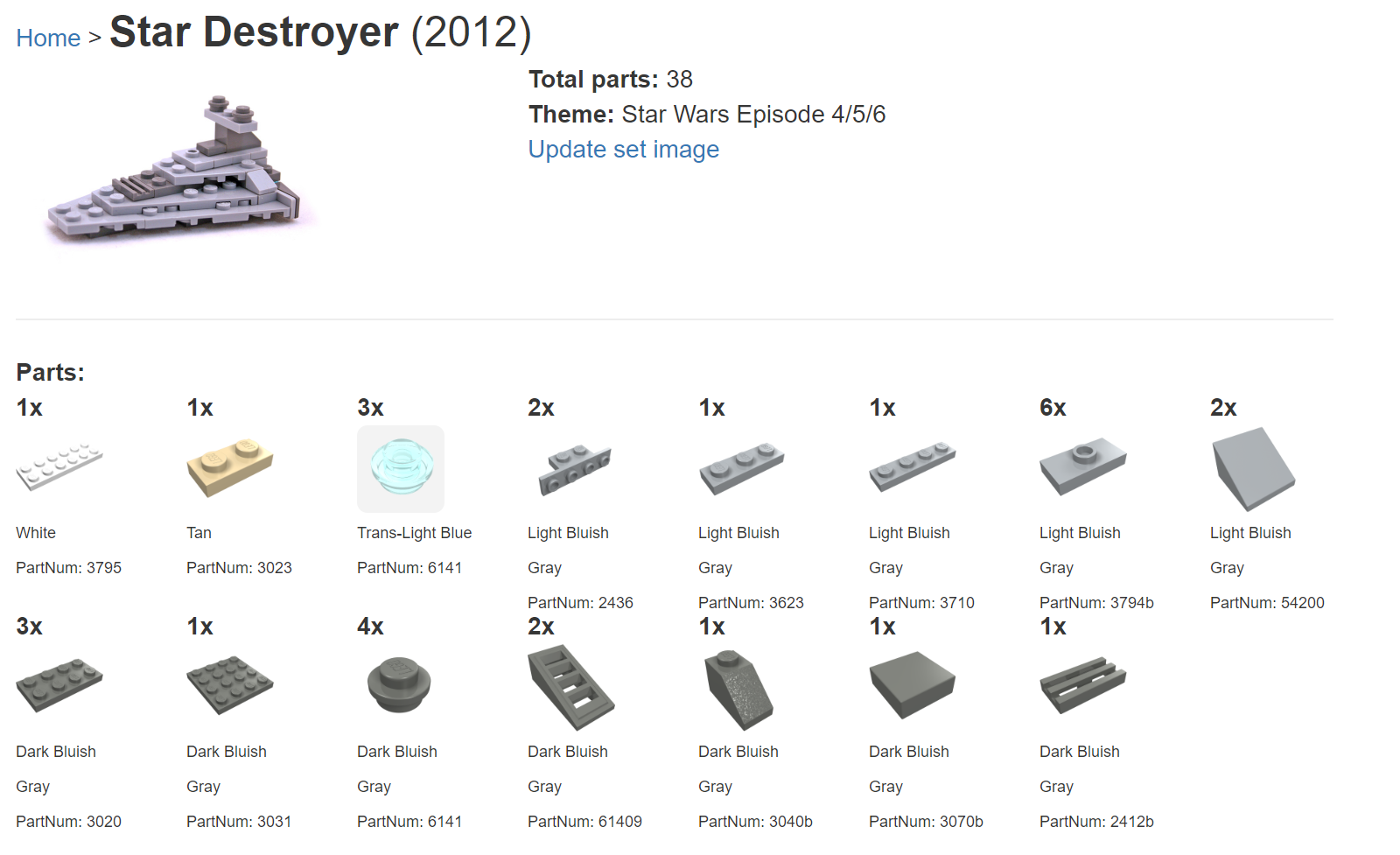

Today we are going to start using cognitive services to enhance our solution. We will start simple, with just one cognitive service this week, and add more over time, including a second one next week. First up is Bing Image Search. The problem? Our sets don’t have a picture of the completed set, and there doesn’t seem to be a downloadable collection of these for us to use. For example, take the Han Solo set below: all we can see is the name, year, total pages, theme, and all of the individual parts.

The solution? We will take the model number, perform a Bing image search, load the resulting image into a storage blob, and add a reference to this image in our database, so that we don’t have to call Bing Image Search again for the same set.

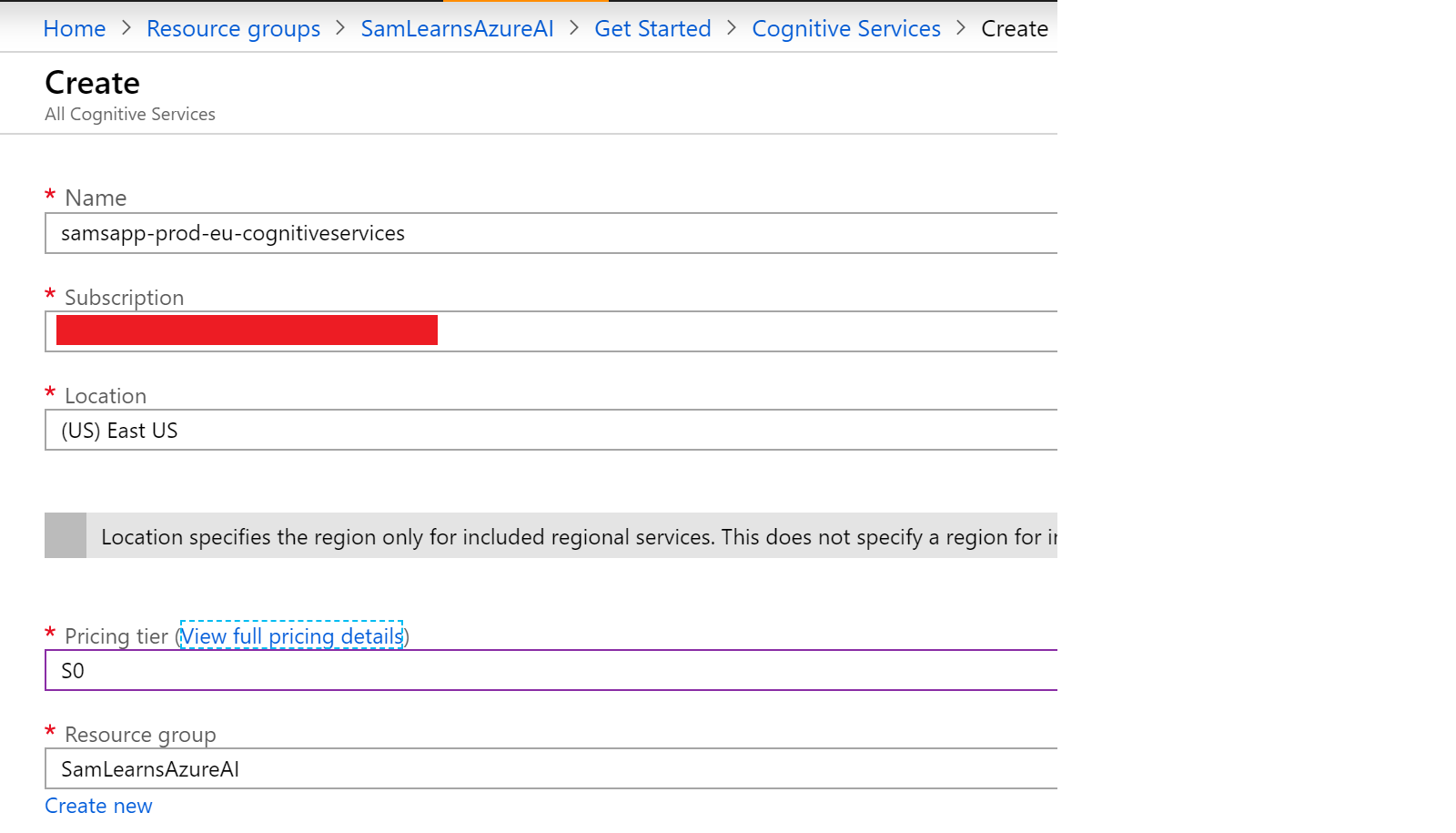

Creating the cognitive services item in Azure

First, we will add a cognitive services resource in Azure. Searching for “cognitive services”, we create the new resource, name it, assign it to a resource group, and select the S0 pricing tier.

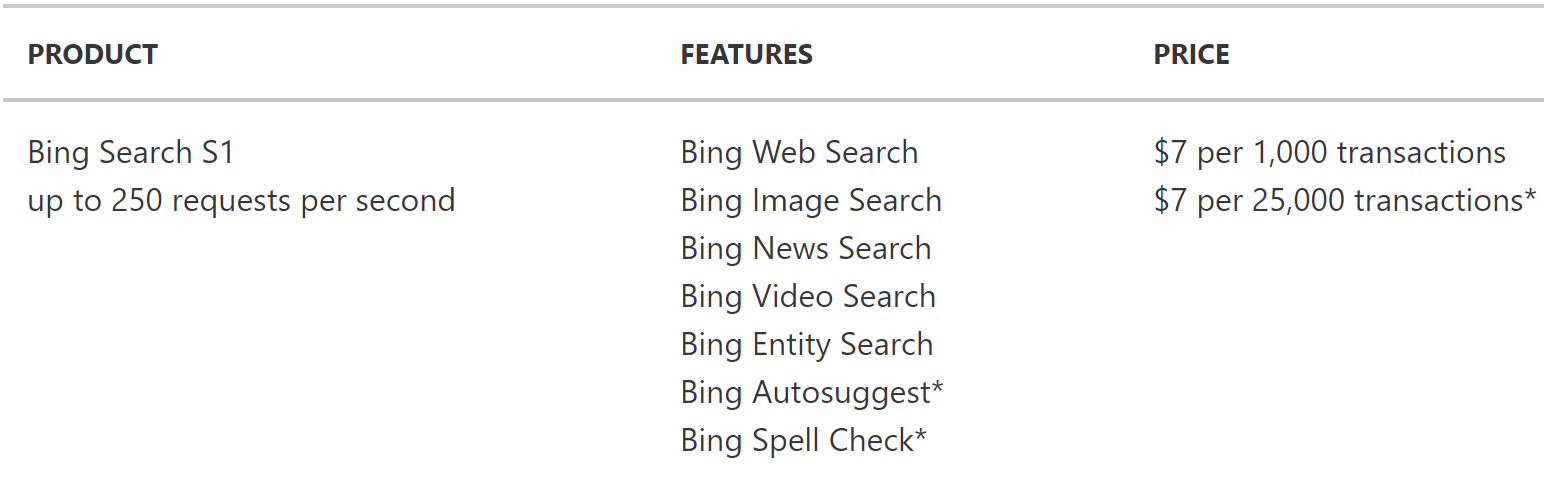

Pricing is pretty reasonable, especially at this scale (we only have 5 products), with 1,000 API calls costing only $1. We are only going to update images for models we are using, rather than populating the entire database (although we may do that later).

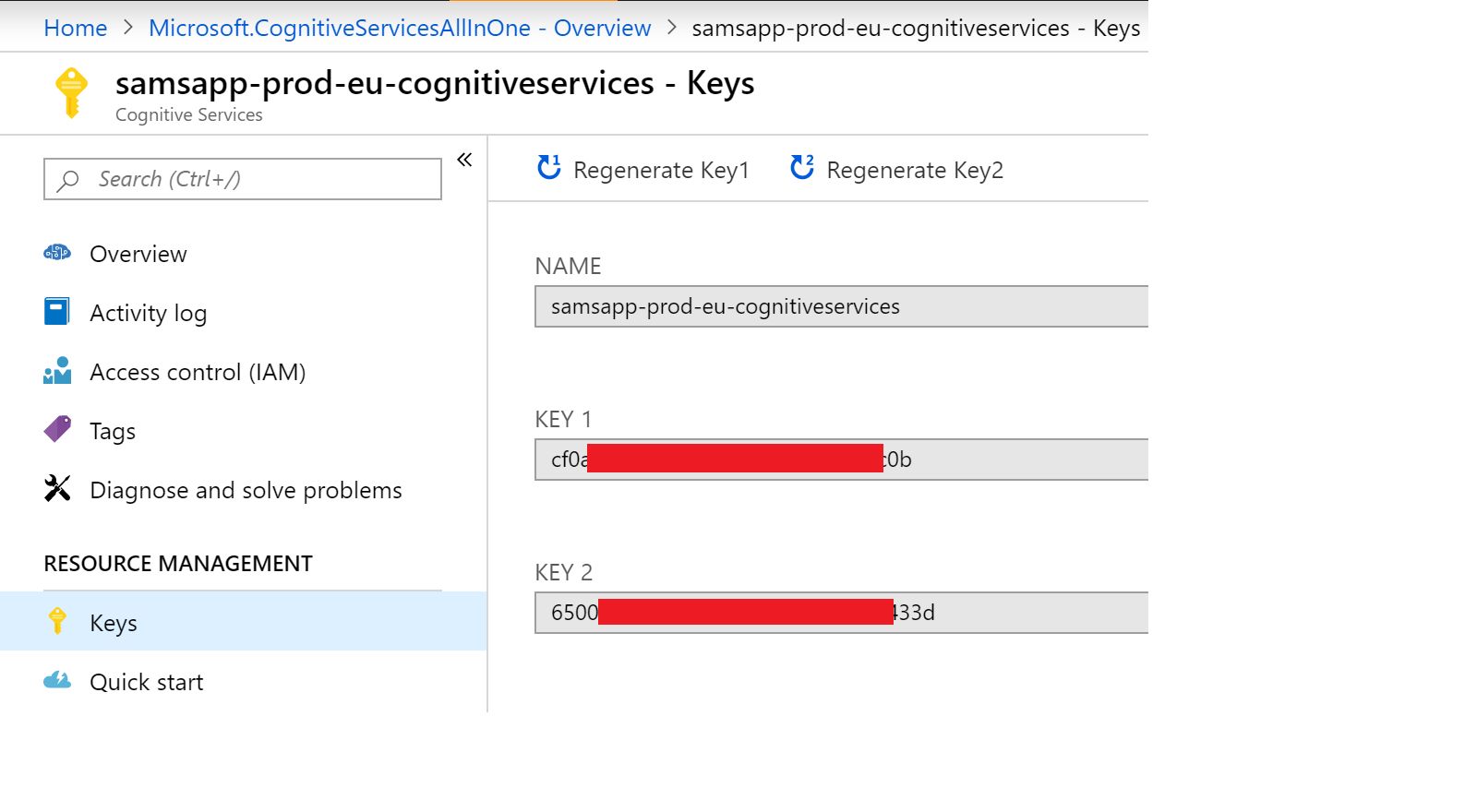

In the new Cognitive Services resource, we browse to the “keys” section, and make a note of the subscription key, which we will need in our code shortly.

We also make a note of the cognitive services base URL for our region. We deployed this cognitive service in East US:

https://eastus.api.cognitive.microsoft.com/bing/v7.0/images/search

Now we call the Bing API, using the subscription key, our base URL, and some parameters: “q” for the search query, and “safesearch” to ensure we only return “safe” results. The rest of the function processes the response to return the search results.
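As a rough illustration of that call, here is a minimal Python sketch (not the code used in this project). It assumes the standard Bing Image Search v7 conventions: the key is sent in the `Ocp-Apim-Subscription-Key` header, and the JSON response holds its results in a `value` list with a `contentUrl` per image. The function names are hypothetical.

```python
import json
import urllib.parse
import urllib.request

# Endpoint for the East US region, as noted above.
SEARCH_URL = "https://eastus.api.cognitive.microsoft.com/bing/v7.0/images/search"


def build_search_request(query, subscription_key, count=10):
    """Build the URL and headers for an image search (no network access)."""
    params = urllib.parse.urlencode({
        "q": query,               # the search terms
        "safeSearch": "Strict",   # only return "safe" results
        "count": count,           # how many results to ask for
    })
    headers = {"Ocp-Apim-Subscription-Key": subscription_key}
    return f"{SEARCH_URL}?{params}", headers


def extract_image_urls(payload):
    """Pull the full-size image URLs out of a parsed JSON response."""
    return [item["contentUrl"]
            for item in payload.get("value", [])
            if "contentUrl" in item]


def search_images(query, subscription_key, count=10):
    """Call the API and return a list of image URLs (requires a valid key)."""
    url, headers = build_search_request(query, subscription_key, count)
    request = urllib.request.Request(url, headers=headers)
    with urllib.request.urlopen(request, timeout=10) as response:
        return extract_image_urls(json.load(response))
```

Keeping the request building and response parsing separate from the network call makes both easy to check without spending API calls.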

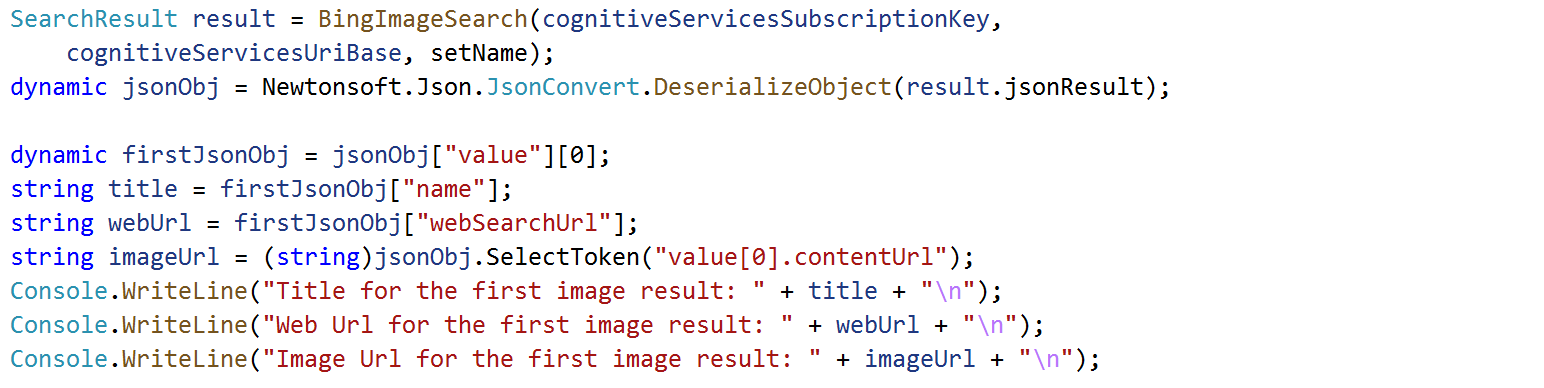

We call our function and process the result, getting an image URL for the new image. We download this image, save it to a new container in our storage account, and then save the name of the image in a new “set_images” database table, so that we don’t have to run the same search over and over.

We incorporate this code into our web service. Now when the web page loads, it checks if the image is in the database. If it finds the image, we display it. If it can’t find one, the service searches Bing, downloads the image to our storage, and then displays the new image. The result for Han Solo is shown below:
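The check-then-search flow described above can be sketched in Python as follows. This is only an outline under stated assumptions: a plain dict stands in for the “set_images” table, the `search` and `store` callables are hypothetical stand-ins for the Bing call and the blob upload, and the `"lego <set number>"` query format is a guess at a sensible search term.

```python
def get_set_image(set_num, image_table, search, store):
    """Return the stored image name for a set, searching only on a cache miss.

    image_table: dict standing in for the "set_images" table (set_num -> image name)
    search:      callable(query) -> list of image URLs
    store:       callable(set_num, image_url) -> stored image name
    """
    cached = image_table.get(set_num)
    if cached is not None:
        return cached  # found in the database: no Bing call needed

    urls = search(f"lego {set_num}")  # assumed query format
    if not urls:
        return None  # nothing found; the page can show a placeholder

    image_name = store(set_num, urls[0])  # download and save the first result
    image_table[set_num] = image_name     # remember it for next time
    return image_name
```

Because the table is updated on the first miss, each set costs at most one search call no matter how often its page is viewed.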

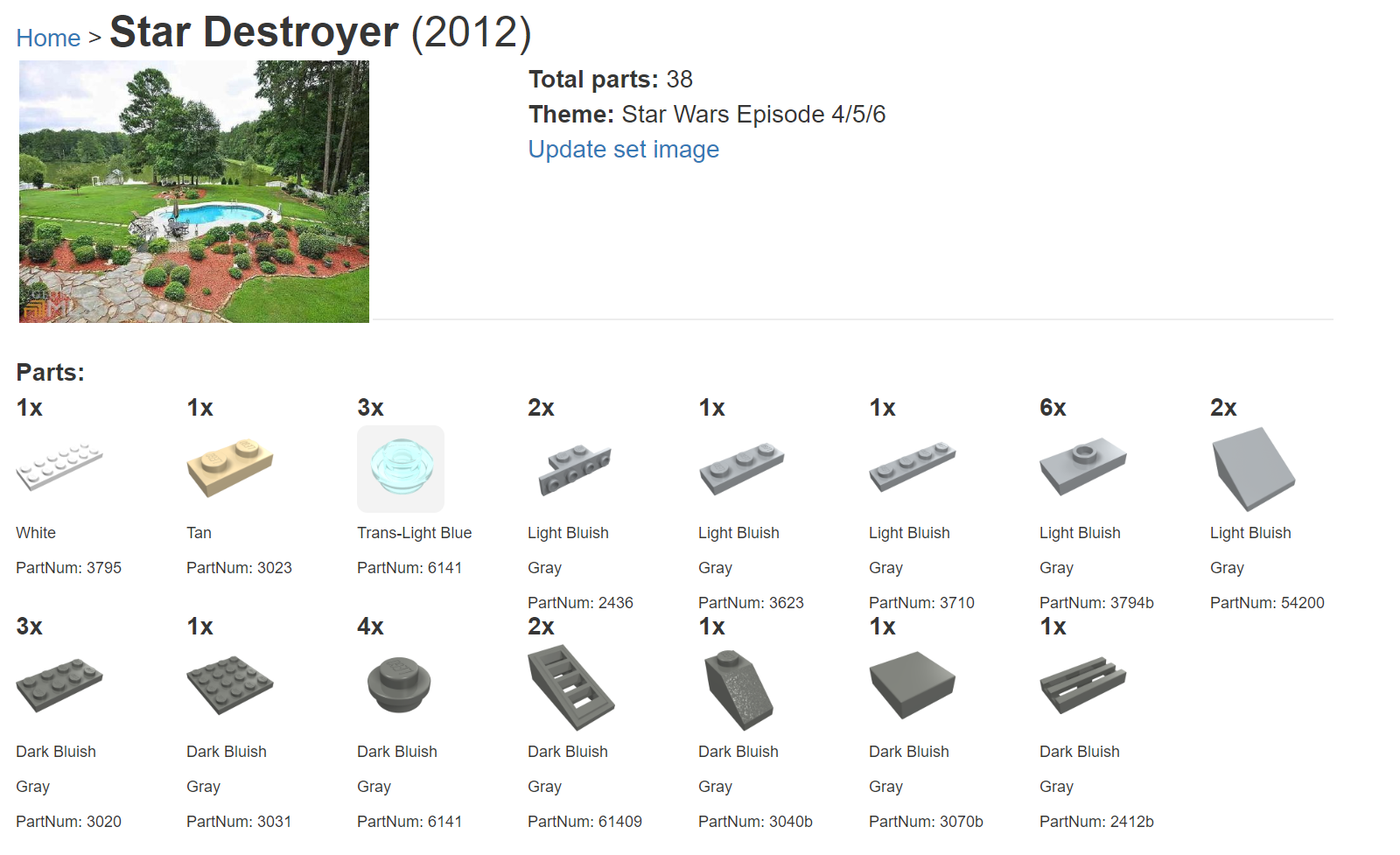

It looks pretty good… except that for some of the models, it displays a false positive. The picture below is clearly not a “Star Destroyer”…

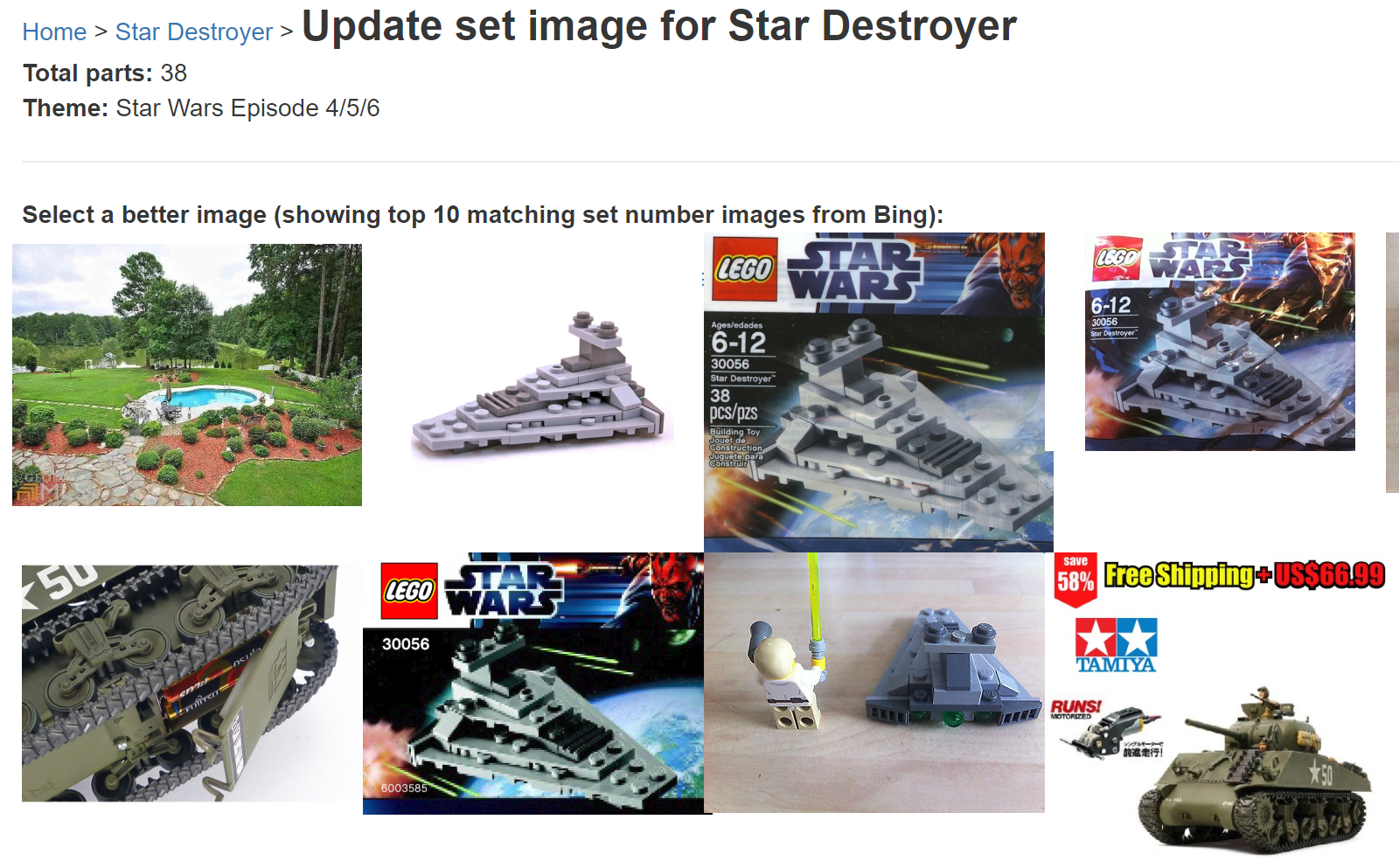

Creating a page to resolve false positives

To correct this, we create a new web page that displays the first 10 images returned from the Bing search, allowing us to select the image we think is best. In this case, the second image is exactly what we are looking for, so we select it, which triggers our application to download this image to our storage account and record it in the database.
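A minimal Python sketch of the logic behind such a page might look like the following. The function names are hypothetical, a dict again stands in for the “set_images” table, and `store` is an assumed callable that downloads an image to storage and returns its saved name.

```python
def list_candidates(image_urls, limit=10):
    """Return (position, url) pairs for the first `limit` results, numbered from 1."""
    return list(enumerate(image_urls[:limit], start=1))


def replace_image(set_num, choice, candidates, image_table, store):
    """Store the chosen candidate and overwrite the set's image record."""
    urls = dict(candidates)  # position -> url
    if choice not in urls:
        raise ValueError(f"choice must be between 1 and {len(candidates)}")
    image_table[set_num] = store(set_num, urls[choice])
    return image_table[set_num]
```

Picking the second image, as in the Star Destroyer case, would then be `replace_image(set_num, 2, candidates, image_table, store)`.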

Wrapup

Our set page now looks great. We’ve used a simple cognitive service to automate a boring manual search process.

There is still more we can do. Next week we are going to apply a second layer of cognitive services to only display and select images that contain Lego.

References

- Bing image search documentation: https://docs.microsoft.com/en-us/azure/cognitive-services/bing-image-search/quickstarts/csharp

- Bing image search pricing: https://azure.microsoft.com/en-us/pricing/details/cognitive-services/?cdn=disable

- Featured image credit: https://www.drupal.org/files/project-images/cognitive.gif
