Today we are going to add high availability to our website with Azure Front Door, a load balancer. This will enable us to spread load across multiple regions and continue to provide service in the event of a major region incident. We are going to build a new environment for this test, creating two new websites, “Team blue” and “Team green”, with background colors to match and assist with our testing. We will integrate it with our main website later.

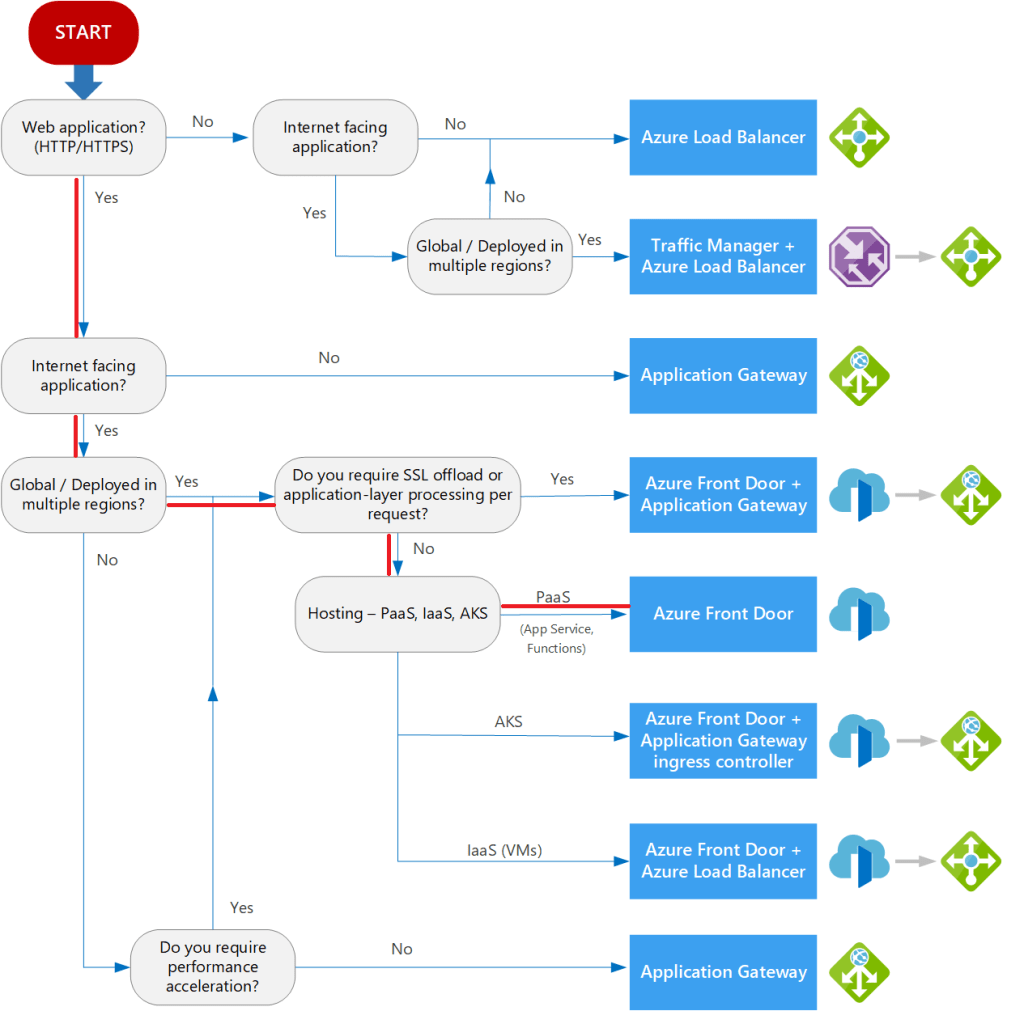

Choosing the best load balancing solution for our project

There are a number of different load balancing options. To help choose the best one, the Microsoft docs provide a useful flowchart on the “Overview of Azure load balancing options” page. Let’s move through the choices together, following the red path in the diagram:

- Web application? Yes.

- Internet facing application? Yes.

- Global/deployed in multiple regions? Yes.

- Do you require SSL offload or application-layer processing per request? No. We do not need special routing.

- Hosting: PaaS, IaaS, or AKS? PaaS.

Conclusion: The best service for our internet facing PaaS web application is Azure Front Door.
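The path we followed through the flowchart can be written as a small decision function. This is only a minimal sketch: the function name, parameter names, and fallback string are hypothetical, and it encodes just the one branch described above. Every other combination of answers is deliberately left to the Microsoft flowchart rather than guessed at here.

```python
def choose_load_balancer(
    web_app: bool,
    internet_facing: bool,
    multi_region: bool,
    needs_per_request_processing: bool,
    hosting: str,
) -> str:
    """Encode only the flowchart path followed in this post."""
    followed_path = (
        web_app
        and internet_facing
        and multi_region
        and not needs_per_request_processing
        and hosting == "PaaS"
    )
    if followed_path:
        return "Azure Front Door"
    # Any other combination of answers is outside the scope of this sketch.
    return "consult the flowchart"


print(choose_load_balancer(True, True, True, False, "PaaS"))
```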

What is Azure Front Door?

Azure Front Door is a global load balancing service. Although we add the Front Door resource to a resource group in a specific region, if that region has an outage, Front Door will continue to work globally. We start by creating two web apps in the same region. Later we will create web apps in multiple regions, but having them in the same region helps us test.

Creating our Front Door service

Now we can create the Front Door service. In Azure we create a new resource group, and then add a new Front Door resource:

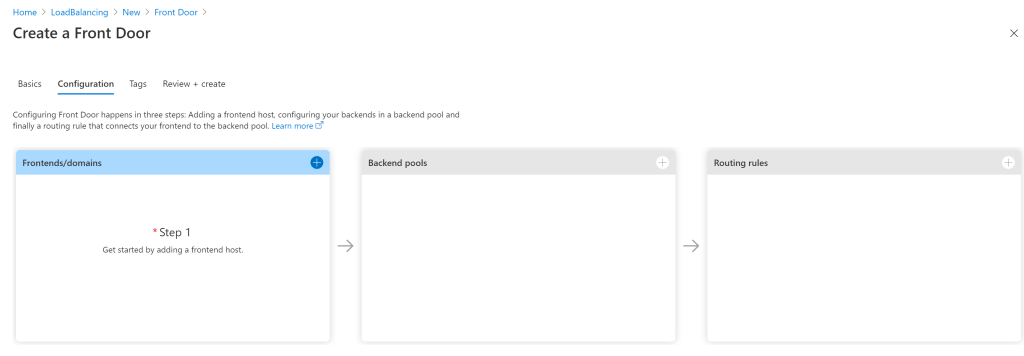

On the next tab, we are presented with three areas to configure:

- Frontends/domains: The entry point, or URL, that users will enter to reach the load balancing service

- Backend pools: The resources that traffic is distributed across, in our case, web apps

- Routing rules: Any custom routing rules. We aren’t going to have any custom rules, but still need to set defaults.

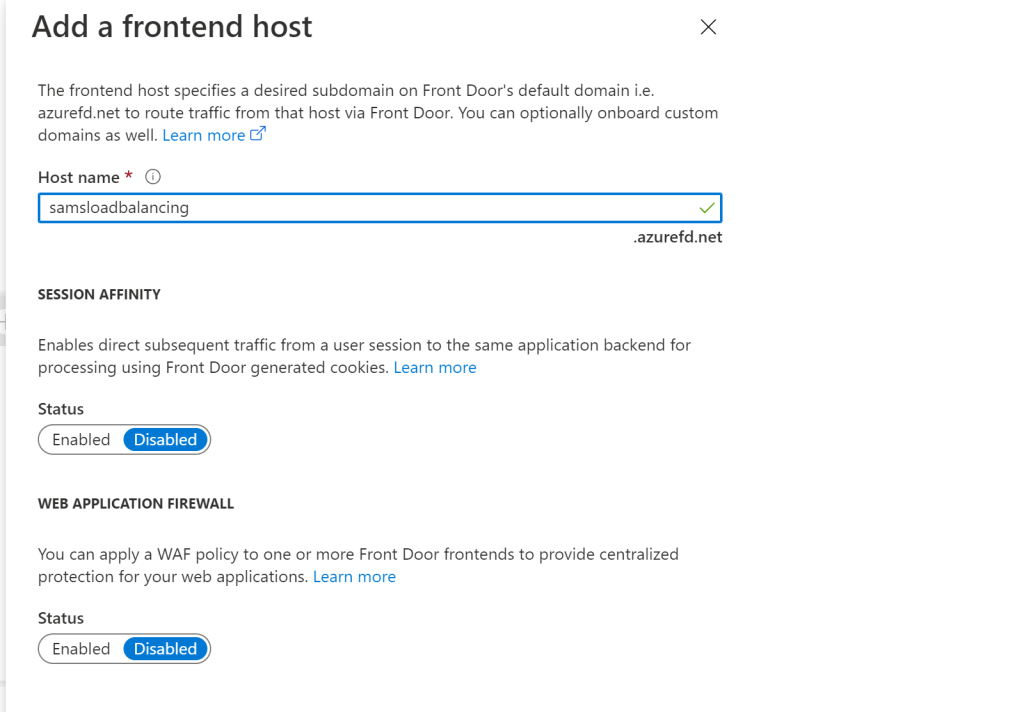

Configuring the frontend host only requires a name, which we will use to access our Front Door service. We leave the rest of the values at their defaults. Note that the first radio button controls session affinity, which directs a user to the same backend host on every request to maintain session state. We are leaving this at the default value of “disabled”, as our website is stateless.

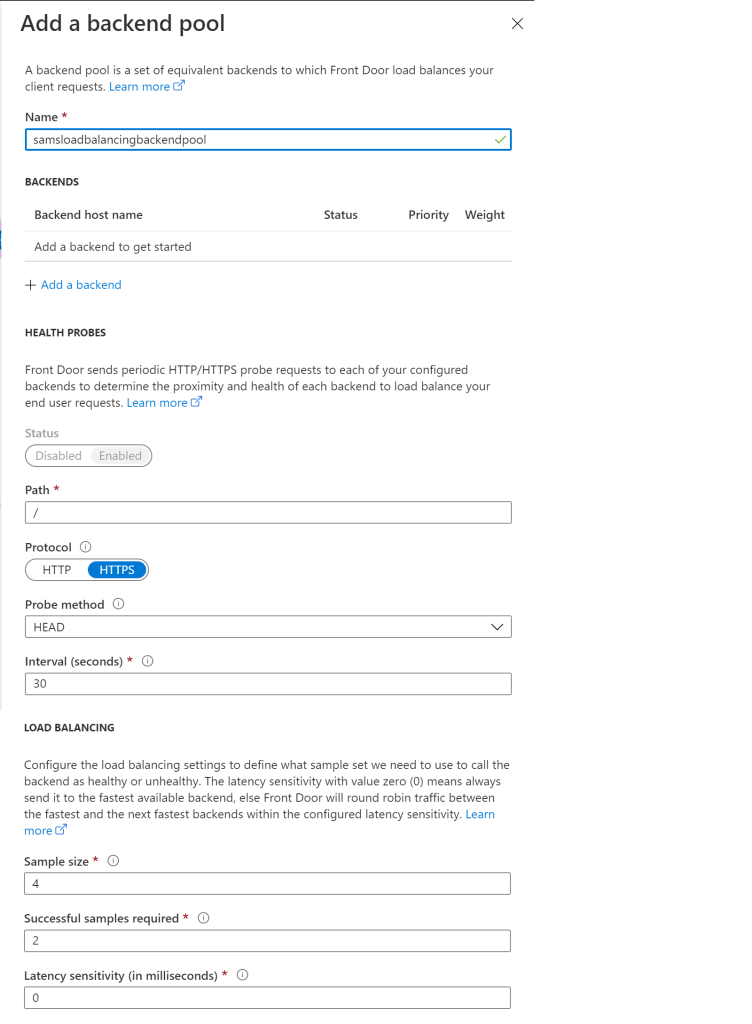

In the backend pool, we first need to name it. We will add the backend web apps shortly, but first, there are two sections we will look at briefly.

- The “Health Probes” section configures how Front Door probes the backend hosts to determine whether each app is healthy, and how often it checks the latency from the Front Door service to the apps. We’ve left this at the default values.

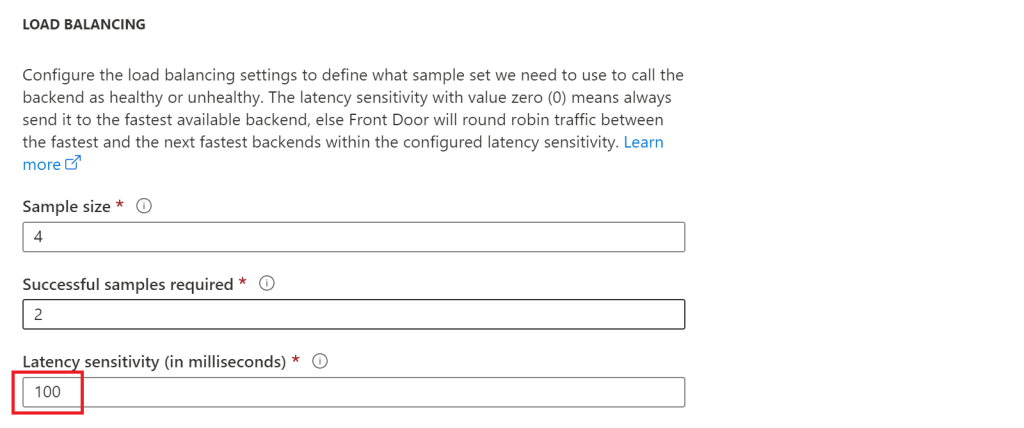

- The “Load balancing” section shows the sample size, the number of successful samples required, and the latency sensitivity, all of which we leave at their default values for now.

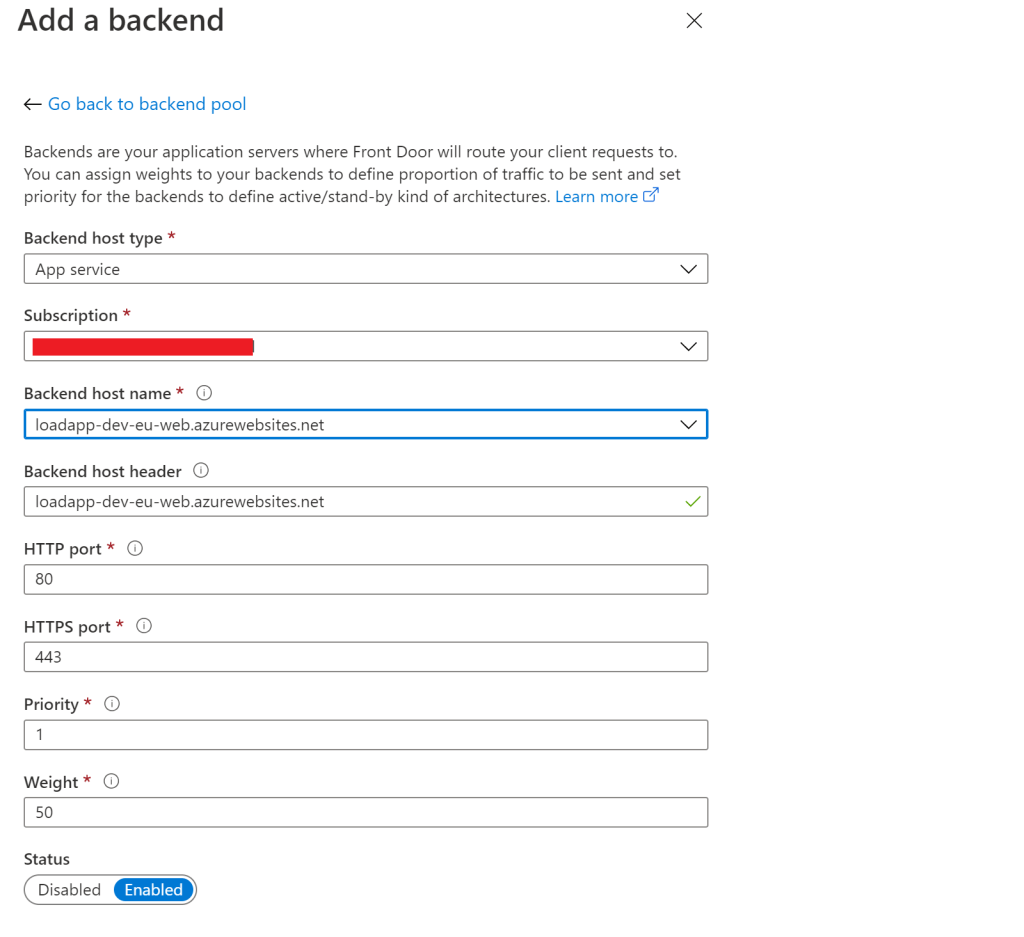
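To make the health probe and load balancing settings a little more concrete, here is a minimal Python sketch of how a sliding window of probe results could decide whether a backend counts as healthy. The class name, method names, and the values 4 and 2 are assumptions for illustration only; this is not Front Door's actual implementation, and the real defaults should be read from the portal.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Optional, Tuple


@dataclass
class BackendHealth:
    """Keeps the most recent probe results for one backend."""

    sample_size: int = 4                  # assumed value, for illustration
    successful_samples_required: int = 2  # assumed value, for illustration
    samples: Deque[Tuple[bool, float]] = field(default_factory=deque)

    def record(self, ok: bool, latency_ms: float) -> None:
        """Store one probe result, discarding results older than the window."""
        self.samples.append((ok, latency_ms))
        while len(self.samples) > self.sample_size:
            self.samples.popleft()

    def is_healthy(self) -> bool:
        """Healthy when enough probes in the window succeeded."""
        successes = sum(1 for ok, _ in self.samples if ok)
        return successes >= self.successful_samples_required

    def average_latency_ms(self) -> Optional[float]:
        """Average latency of the successful probes, or None if there are none."""
        latencies = [ms for ok, ms in self.samples if ok]
        return sum(latencies) / len(latencies) if latencies else None


blue = BackendHealth()
for ok, ms in [(True, 44.0), (False, 0.0), (True, 46.0), (True, 45.0)]:
    blue.record(ok, ms)
print(blue.is_healthy(), blue.average_latency_ms())
```

A larger sample size smooths out a single failed probe, at the cost of reacting more slowly when a backend really does go down.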

Now we are ready to add a backend. Here we select “App service” as the backend host type, then our subscription, and then the web app we want Front Door to route traffic to. We do this for both of our new web apps. We leave all of the other values at their defaults, but of note are the priority and weight, which, after latency, are used to help decide which web app receives each request.

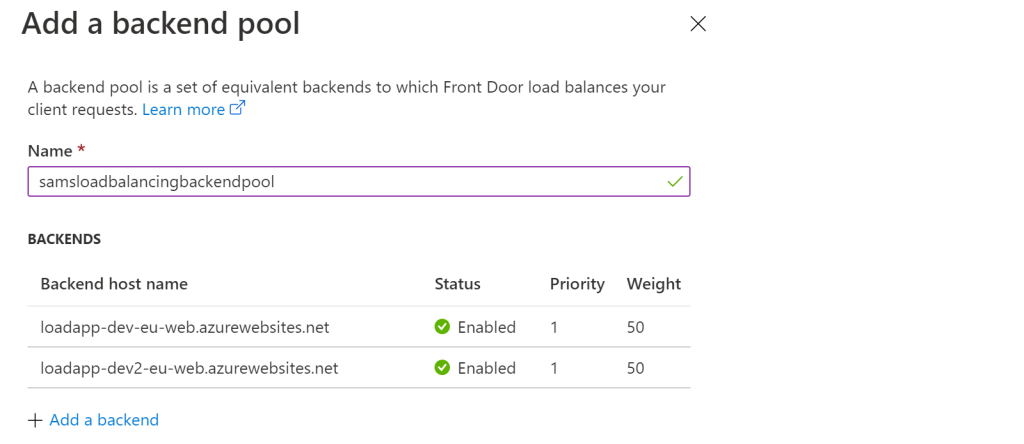

With both backends set up in the pool, we can see they are both enabled and have the same priority and weight.

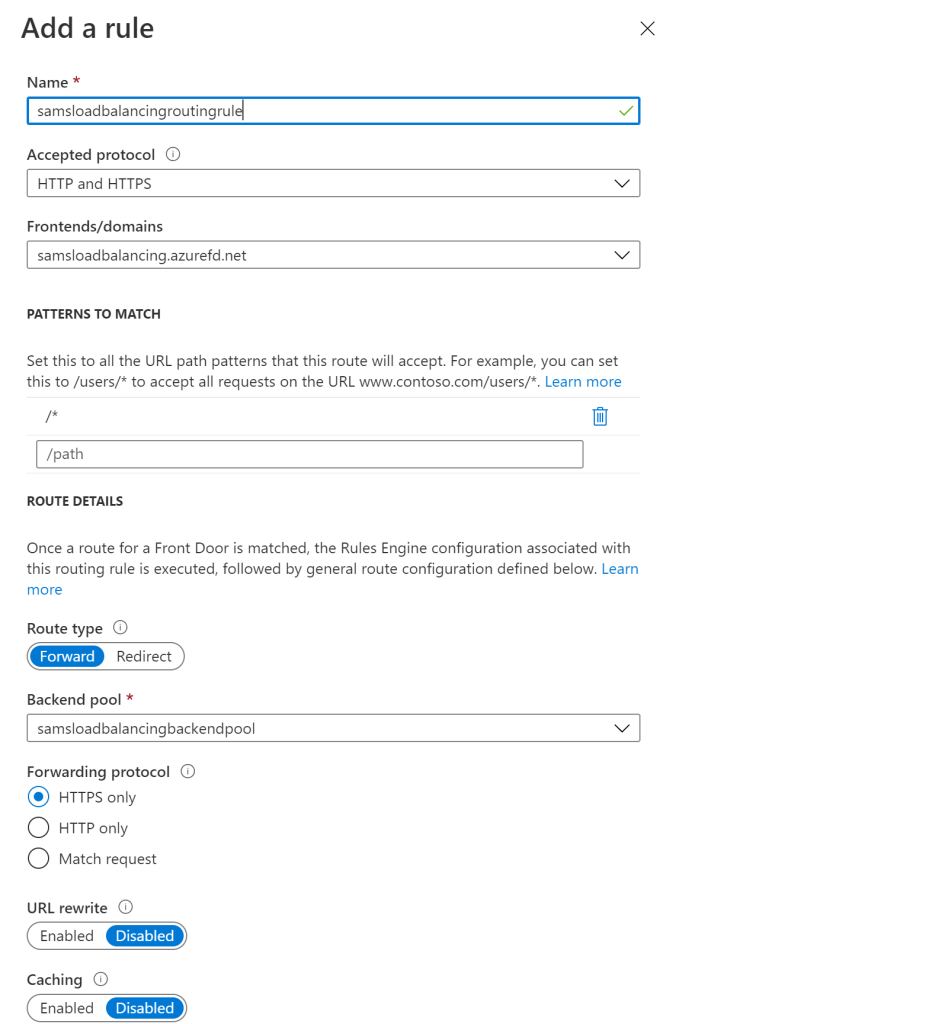

Now we add the routing rules. We aren’t going to customize these, except to name them and select the backend pool.

We can now complete the Front Door service creation.

Optimizing Front Door

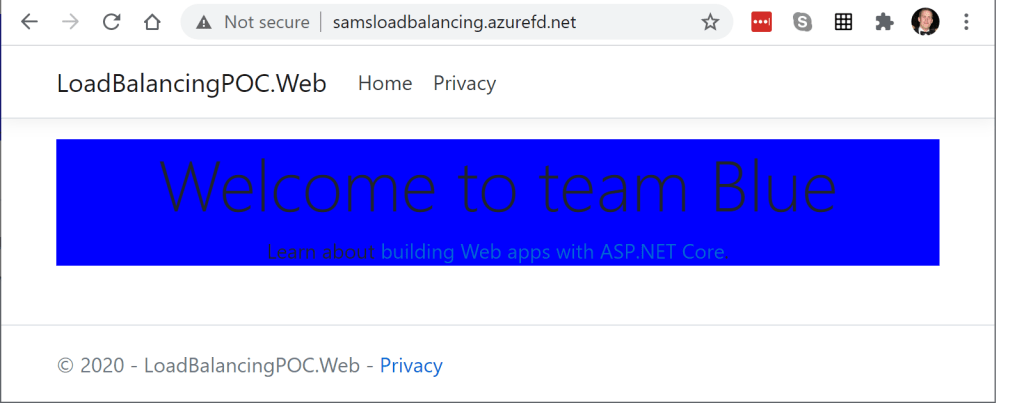

Let’s browse to the endpoint (http://samsloadbalancing.azurefd.net/; note that as we’ve since removed this Front Door instance, the link no longer works) to see what happens. Remember earlier when we said that we have deployed two web apps? After creating both web apps, we modified each one to have a green or blue background, to make them easy to identify. We browse to the endpoint and see “team blue”.

When we refresh the page, we expect it to switch between the two sites roughly 50% of the time, but wait, we only see “team blue”. What is going on? Didn’t we set the priority to 1 and the weight to 50? Let’s look a little deeper at what is happening. By default, Front Door measures the latency between itself and each backend resource, and sends traffic to the fastest one. What if “team blue” is always faster than “team green” by 10-20ms? We will never direct load to “team green”. We can address this by editing the latency sensitivity in the backend pool.

First, let’s check what the latency is between the web apps and Azure Front Door. We can test this by logging into the Kudu console of each of our web apps and running the “tcpping” command against the load balancer.

tcpping samsloadbalancing.azurefd.net

In this case, even though both web apps are in the same region, one has a latency of about 45ms, the other about 50ms. There is some variability as we run it a few times, but one web app (team blue) is usually lower than the other (team green). Essentially, this means that the team blue backend web app will be used a lot more than the team green web app.

We can address this by configuring the latency sensitivity in the backend pool. By default it’s 0, which means Front Door always takes the fastest connection. If we set ours to 100ms, Front Door will consider all backends whose latency is within 100ms of the fastest one, and then use the priority and weighting we’ve already configured to spread the load 50/50.
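The effect of the latency sensitivity setting can be illustrated with a small simulation. This is a hedged sketch rather than Front Door's real algorithm: the `Backend` class and both function names are hypothetical, the order of the priority and latency filters is a simplification, and it uses a weighted random choice to stand in for however Front Door actually distributes requests by weight. The 45ms and 50ms latencies are the rough values we measured above.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Backend:
    name: str
    latency_ms: float
    priority: int = 1
    weight: int = 50


def eligible_backends(backends: List[Backend], latency_sensitivity_ms: float) -> List[Backend]:
    """Keep the top-priority backends within the sensitivity range of the fastest."""
    if not backends:
        return []
    top_priority = min(b.priority for b in backends)
    candidates = [b for b in backends if b.priority == top_priority]
    fastest = min(b.latency_ms for b in candidates)
    return [b for b in candidates if b.latency_ms <= fastest + latency_sensitivity_ms]


def pick_backend(
    backends: List[Backend],
    latency_sensitivity_ms: float,
    rng: Optional[random.Random] = None,
) -> Backend:
    """Pick one eligible backend at random, in proportion to its weight."""
    pool = eligible_backends(backends, latency_sensitivity_ms)
    if not pool:
        raise ValueError("no eligible backends")
    rng = rng or random.Random()
    return rng.choices(pool, weights=[b.weight for b in pool], k=1)[0]


blue = Backend("team blue", latency_ms=45.0)
green = Backend("team green", latency_ms=50.0)

# With a sensitivity of 0, only the fastest backend is eligible.
print([b.name for b in eligible_backends([blue, green], 0)])
# With a sensitivity of 100ms, both backends fall inside the range.
print([b.name for b in eligible_backends([blue, green], 100)])
```

With a sensitivity of 0, a 5ms difference is enough to exclude “team green” entirely, which matches what we saw when refreshing the page.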

We run our test again, and note that requests are now split roughly 50/50 between “Team blue” and “Team green”.
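Refreshing the browser by hand works, but the same check can be scripted. The sketch below is one possible approach and makes an assumption we have not verified: that each page's HTML contains the text “team blue” or “team green”. The function names are hypothetical, and the fetch function is injectable so the counting logic can be tried without a live endpoint.

```python
from collections import Counter
from typing import Callable
from urllib.request import urlopen


def fetch_page(url: str) -> str:
    """Download a page and return its body as text."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def tally_backends(url: str, attempts: int, fetch: Callable[[str], str] = fetch_page) -> Counter:
    """Request the URL repeatedly and count which site answered each time."""
    counts: Counter = Counter()
    for _ in range(attempts):
        body = fetch(url).lower()
        if "team blue" in body:
            counts["team blue"] += 1
        elif "team green" in body:
            counts["team green"] += 1
        else:
            counts["unknown"] += 1
    return counts


# Example call against a live endpoint (ours has since been removed):
# print(tally_backends("http://samsloadbalancing.azurefd.net/", 20))
```

A small number of attempts will rarely give an exact 50/50 split, so it is the rough balance that matters rather than the precise counts.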

Custom Domains

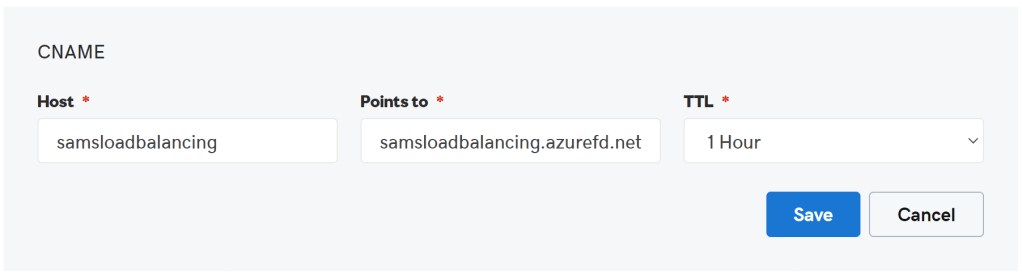

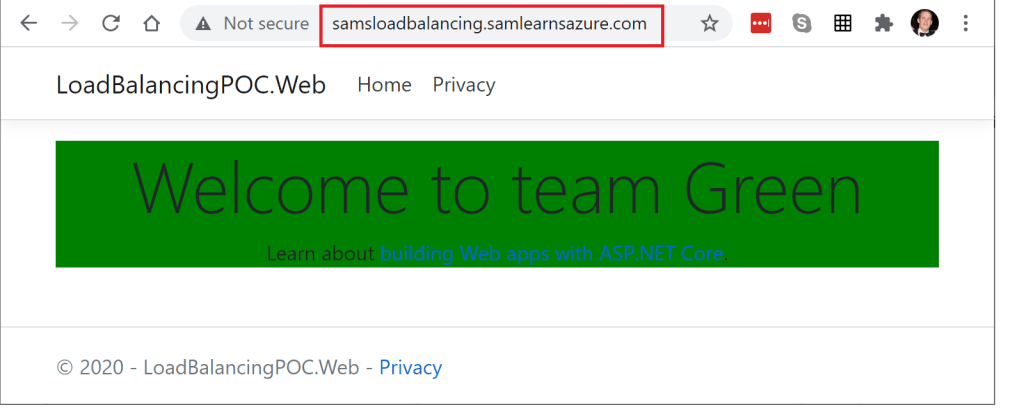

Finally, we are going to add a custom domain, so that our users can browse to our website with a friendly DNS name instead of the ugly Front Door address. We start by creating a CNAME record in our GoDaddy account, so that “samsloadbalancing.samlearnsazure.com” points to our Front Door endpoint, “samsloadbalancing.azurefd.net”.

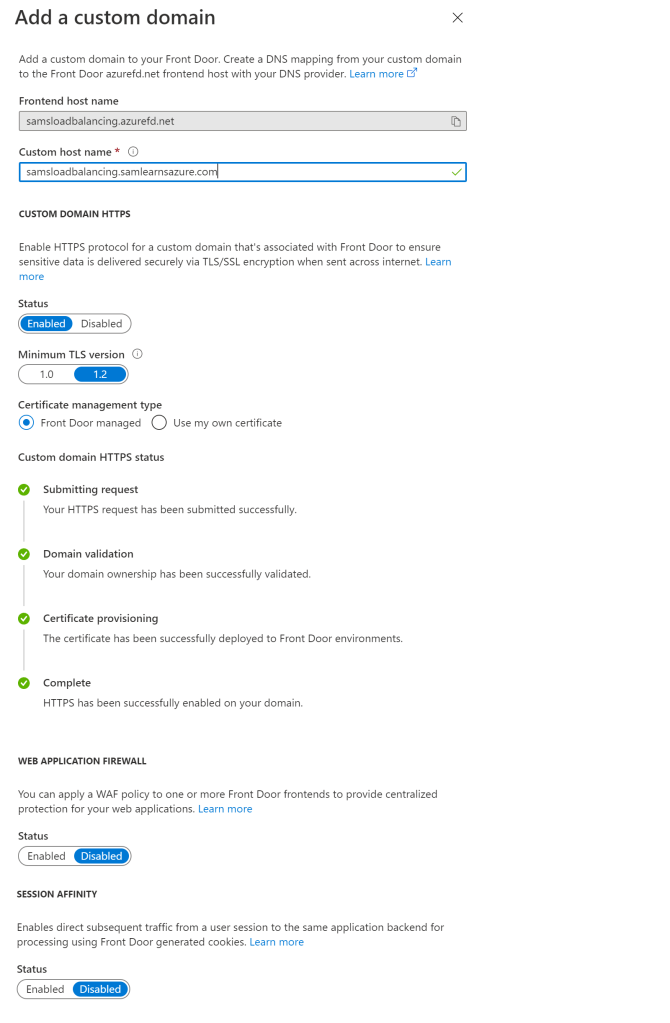

We need some time for the DNS setting in GoDaddy to propagate, so this is a good time to get a cup of tea. With our new cup of tea, in Front Door, we can add a new frontend custom domain, using the CNAME host we just configured, “samsloadbalancing.samlearnsazure.com”. We also enable the “Custom Domain HTTPS” radio button. We have two choices here: use our own certificate, or use the Front Door managed option. We choose the Front Door managed option, and after some time, the certificate is set up and added to our service.

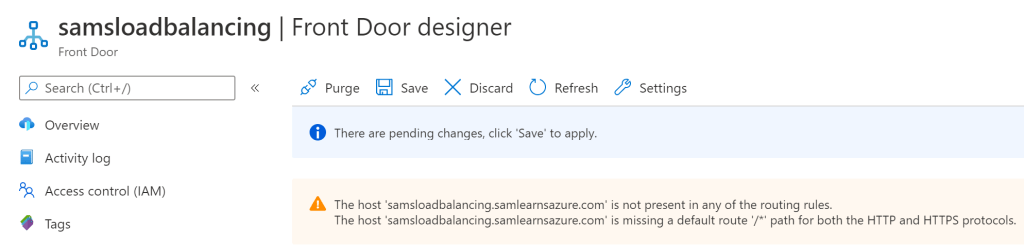

We save and are immediately prompted to fix our routing. Let’s have a closer look.

Opening the routing rules, we need to ensure that the routing rule also includes our custom domain.

With the routing and domain configured, we try out the new domain, and it works perfectly, except for one thing: the certificate on the backend. Due to some Front Door limitations on CA availability, we cannot use Let’s Encrypt for the Front Door certificates. For cost reasons, we are going to leave this unresolved, but typically we would create a wildcard certificate for the backend web app to allow traffic to our resources to flow easily.

Wrap-up

Today we created a high availability load balancer in Azure – and even though it was all infrastructure, it wasn’t too complex. The next step for us is to generate the ARM templates to configure this and integrate it in the main project. This will take some time, as we plan to also re-architect the overall solution to work better with this and upgrade the architecture to support multiple region failover.

References

- Load balancing options: https://docs.microsoft.com/en-us/azure/architecture/guide/technology-choices/load-balancing-overview

- Azure Front Door overview: https://docs.microsoft.com/en-us/azure/frontdoor/front-door-overview

- Azure Front Door routing methods: https://docs.microsoft.com/en-us/azure/frontdoor/front-door-routing-methods

- .NET page using Front Door to run 50% of the traffic to .NET 5 and 50% to .NET 3.1: https://devblogs.microsoft.com/dotnet/announcing-net-5-0-preview-2/

- Front Door CA availability: https://docs.microsoft.com/en-us/azure/frontdoor/front-door-troubleshoot-allowed-ca

- Front Door diagnostics: https://docs.microsoft.com/en-us/azure/frontdoor/front-door-diagnostics

- Featured image credit: https://www.ctl.io/assets/images/blog/load-balancing.png
