The importance of shifting left

We all know that automated testing is a key component of a successful DevOps practice. However, it’s also common for organizations to underestimate the change effort required to create a complete testing strategy. In this blog post we are going to demonstrate the advantages of creating a clear automated testing strategy, in three simple steps.

We often hear comments such as “We don’t need unit tests, we have UI tests” or “Our automated tests need 8 hours to run”. In DevOps, our automated testing goal is to be able to regression test as much of the product as possible, as early in the development process as possible. The cornerstone of this strategy is often referred to as “shifting left”: if a developer spots a defect while actively developing a feature, it is significantly cheaper to resolve than if the defect is discovered later in the process. We can see this in the diagram below, showing cost over development time.

Step 1: define automation tests

An important first step for your automated test strategy is to define the test types, their function, and the rules around them. This naming is almost always controversial. The names used here are for demonstration, and we recognize that teams will settle on different naming structures. As there are many different definitions for test types, collaborating with your team to document and agree on these rules will ensure the team is on the same page about the goals and purpose of each test. The simplest automated test strategy should have at least three test types:

- Unit tests: These tests typically run in milliseconds, with no dependencies (e.g. not calling web services or SQL databases), often using a mock framework. Unit tests can run in any layer or technology of your application, from JavaScript to C# to SQL.

- Integration tests: These tests typically run in seconds, and involve limited dependencies, such as web services or SQL databases.

- Functional tests: These tests typically run in minutes, against most of, if not the entire, tech stack, and often include complete end user scenarios or workflows, such as opening a webpage in the UI or querying API endpoints. These tests see the greatest variation in naming, and are sometimes referred to as UI tests, acceptance tests, load tests, security tests, smoke tests, etc. Since they don’t always use a UI, I prefer “functional tests”.

| Test type | Typical time to run | Example frameworks | Example |
| --- | --- | --- | --- |
| Unit test | Milliseconds | MSTest, NUnit, XUnit, Jasmine | Testing a function within a class, confirming the result of a + b = c. |
| Integration test | Seconds | MSTest, NUnit, XUnit, Jasmine | Testing a function that calls a SQL database with parameters a and b, confirming the database returns c. Often incorrectly called unit tests. |
| Functional test | Minutes | Selenium, JMeter | With automation, open a web page, enter inputs a and b, trigger a button click to start the calculation, and after some waiting/processing, confirm the result displayed is correct. |
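To make the first two rows of the table concrete, here is a minimal sketch of the a + b = c example. It uses Python's built-in `unittest`, `unittest.mock`, and `sqlite3` modules purely as stand-ins for frameworks such as MSTest or NUnit and for a real SQL database. The function names `add` and `add_in_database` are hypothetical and exist only for this illustration.

```python
import sqlite3
import unittest
from unittest import mock


def add(a, b):
    """Pure function with no I/O, so a unit test can cover it directly."""
    return a + b


def add_in_database(connection, a, b):
    """Hypothetical example: asks a SQL database to compute a + b."""
    row = connection.execute("SELECT ? + ?", (a, b)).fetchone()
    return row[0]


class UnitTests(unittest.TestCase):
    def test_add(self):
        # No dependencies at all: a + b = c.
        self.assertEqual(add(2, 3), 5)

    def test_add_in_database_with_mock(self):
        # The connection is replaced by a mock, so no database is touched.
        connection = mock.Mock()
        connection.execute.return_value.fetchone.return_value = (5,)
        self.assertEqual(add_in_database(connection, 2, 3), 5)
        connection.execute.assert_called_once_with("SELECT ? + ?", (2, 3))


class IntegrationTests(unittest.TestCase):
    def test_add_in_database(self):
        # A real (in-memory) SQLite database stands in for the SQL dependency.
        connection = sqlite3.connect(":memory:")
        try:
            self.assertEqual(add_in_database(connection, 2, 3), 5)
        finally:
            connection.close()
```

Saved as a module, these tests can be run with `python -m unittest`. The two unit tests never leave the process, while the integration test exercises an actual SQL engine, which is the distinction the table draws.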

There will be other types of tests we will want to include in our strategy, such as load tests, performance tests, security tests, smoke tests, and acceptance tests. However, when adding these additional test types, make sure each one has a purpose and a goal that improves our DevOps process, and isn’t present just to tick a box.

Step 2: define when to use each test type

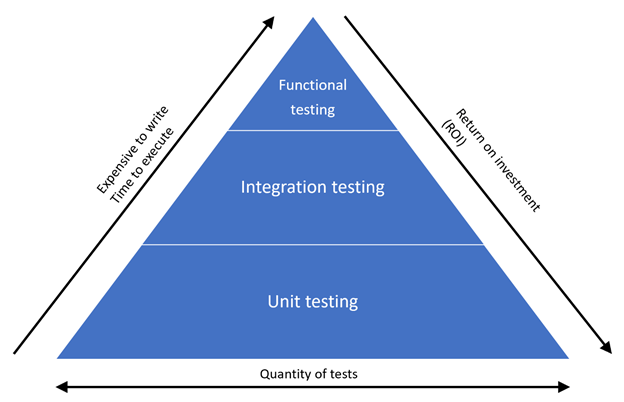

Once we have our test types, a recommended test strategy is to use the “Test Pyramid” to ensure we have the right distribution of tests. Fundamentally, when writing a test, we should always ask “Can we address this with a unit test?” If we cannot, we try to address it with an integration test, and as a last resort, we use a functional test. This strategy is simple, and we can see its benefits in the diagram below: a unit test is cheaper to write and executes faster, hence we should have more of them.

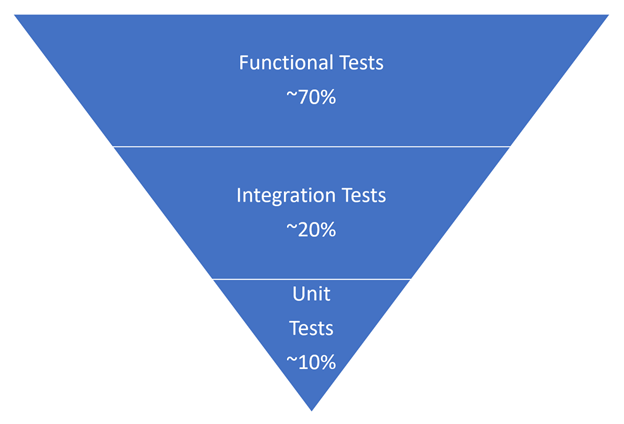

When organizations first started to automate tests, it was logical that they would try to automate what their QA staff were already doing, using tools such as Selenium and JMeter. Unfortunately, this creates a testing strategy sometimes called the “Ice cream cone”. In the “Ice cream cone”, shown in the diagram below, most of our tests run directly on the UI. While these tests are functional, they are very expensive, tend to be relatively slow compared to unit tests, are time consuming to write, are ‘flaky’ and/or unreliable, and can’t be run until late in the development process (usually after a deployment).

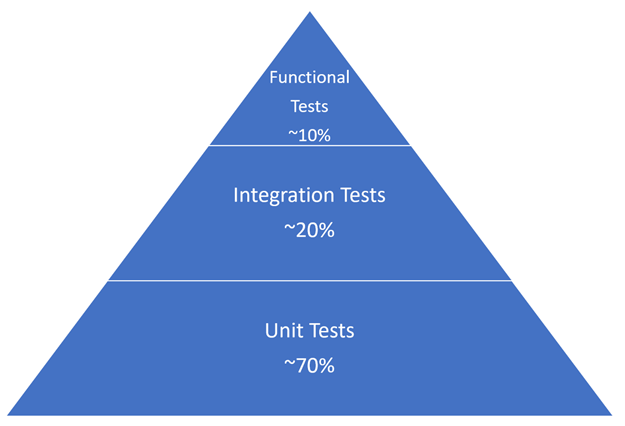

Contrast this with the recommended pattern in a “test pyramid”. Note that we can test the same functionality; we have just weighted our tests heavily toward unit tests instead of functional tests. The percentages will vary with every project, depending on the end product and technology used. For example, some websites might have more functional tests than a microservices project.
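One rough way to see which shape a test suite has is to count its tests by type. The sketch below is illustrative only: the function names, the dictionary keys, and the strict ordering rule used to label a suite are assumptions made for this example, not a formal definition, and the counts are sample numbers.

```python
def allocation(counts):
    """Return each test type's share of the suite as a whole-number percentage."""
    total = sum(counts.values())
    return {test_type: round(100 * n / total) for test_type, n in counts.items()}


def shape(counts):
    """Label a suite by comparing the number of tests of each type (assumed rule)."""
    if counts["unit"] > counts["integration"] > counts["functional"]:
        return "test pyramid"
    if counts["functional"] > counts["integration"] > counts["unit"]:
        return "ice cream cone"
    return "mixed"


# Sample counts for a hypothetical suite that is heavy on UI tests.
ui_heavy_suite = {"functional": 350, "integration": 100, "unit": 50}
print(allocation(ui_heavy_suite))  # {'functional': 70, 'integration': 20, 'unit': 10}
print(shape(ui_heavy_suite))       # ice cream cone
```

Because the right percentages vary by project, a check like this is better treated as a conversation starter for the team than as a hard gate.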

Step 3: create test allocation goals

Using some assumptions about run times (these are broad strokes), we can calculate the total expected time for our tests in each of the different strategies. For example, let’s assume a functional test needs a minute to complete, an integration test needs a second, and a unit test needs a millisecond, and that we have 500 tests in our project. Note that these numbers are conservative: it’s not uncommon for functional tests to take several minutes, integration tests to take tens of seconds, and unit tests to take tens of milliseconds.

| Ice cream cone | Number of tests | Timing |
| --- | --- | --- |
| Functional tests (1 min per test) | 350 (70% of 500 tests) | 350 minutes (5 hours, 50 mins) |
| Integration tests (1 sec per test) | 100 (20% of 500 tests) | 100 seconds (1 minute, 40 secs) |
| Unit tests (1 ms per test) | 50 (10% of 500 tests) | 50 ms |
| Total | 500 | 5 hours, 51 mins, 40 secs, 50 ms |

| Test pyramid | Number of tests | Timing |
| --- | --- | --- |
| Functional tests (1 min per test) | 50 (10% of 500 tests) | 50 minutes |
| Integration tests (1 sec per test) | 100 (20% of 500 tests) | 100 seconds (1 minute, 40 secs) |
| Unit tests (1 ms per test) | 350 (70% of 500 tests) | 350 ms |
| Total | 500 | 0 hours, 51 mins, 40 secs, 350 ms |

You’ll note the timing difference of approximately 5 hours, and how much the allocation of tests affects overall testing time. This is an example with just 500 tests; imagine this scaled out to larger projects. We have seen many projects with Selenium tests that run for 24-48 hours, when a more balanced test strategy would see numbers closer to an hour or two for a full regression test. Azure DevOps itself has over 70k automated tests that run in 10-15 minutes.

When using the test pyramid strategy, note that 90% of the tests (the unit and integration tests) run in less than 2 minutes, and the bulk of these are unit tests, which are usually run in Visual Studio. These unit tests are so quick that a developer can use a feature such as “Live Unit Testing” to get near-instant feedback as they write code. This is real “shifting left”, and helps our developers be more confident that they are producing quality code before it is deployed to users.
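The arithmetic behind these figures can be reproduced with a short sketch. It assumes the per-test durations and the 500-test allocations stated above, and that tests run one after another. The names `MS_PER_TEST` and `run_time` are made up for this example.

```python
from datetime import timedelta

# Per-test durations assumed in the text, expressed in milliseconds.
MS_PER_TEST = {"functional": 60_000, "integration": 1_000, "unit": 1}

# The two allocations of 500 tests compared in the text.
ICE_CREAM_CONE = {"functional": 350, "integration": 100, "unit": 50}
TEST_PYRAMID = {"functional": 50, "integration": 100, "unit": 350}


def run_time(counts, test_types=None):
    """Total sequential run time for the given test types (all types by default)."""
    if test_types is None:
        test_types = counts.keys()
    total_ms = sum(MS_PER_TEST[t] * counts[t] for t in test_types)
    return timedelta(milliseconds=total_ms)


print("Ice cream cone:", run_time(ICE_CREAM_CONE))  # 5:51:40.050000
print("Test pyramid:  ", run_time(TEST_PYRAMID))    # 0:51:40.350000
print("Difference:    ", run_time(ICE_CREAM_CONE) - run_time(TEST_PYRAMID))  # 4:59:59.700000

# Unit and integration tests only: 450 of the 500 pyramid tests (90%).
print("Fast tests:    ", run_time(TEST_PYRAMID, ["unit", "integration"]))  # 0:01:40.350000
```

Swapping in your own counts and measured durations gives a quick estimate of how a change in allocation would affect a full regression run.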

Next steps

Ultimately, every team has a different set of resources, tools, and processes to address within their DevOps process. Spending a short amount of time defining the test types, strategy, and allocations will put your team ahead of the competition. An unbalanced test allocation can be painful, expensive, and slow to execute. A good test allocation helps to create a high-performance DevOps process and deliver superior value to your end users.
